Update README.md


README.md

CHANGED

|

@@ -23,11 +23,11 @@ base_model:

|

|

| 23 |

|

| 24 |

## Model Summary

|

| 25 |

|

| 26 |

-

QED-Nano is a 4B parameter model explicitly post-trained to strengthen

|

| 27 |

|

| 28 |

|

| 29 |

|

| 30 |

-

QED-Nano is

|

| 31 |

|

| 32 |

## How to use

|

| 33 |

|

|

@@ -90,13 +90,6 @@ In this section, we report the evaluation results of QED-Nano. All evaluations a

|

|

| 90 |

|

| 91 |

[ADD TABLE]

|

| 92 |

|

| 93 |

-

## Training

|

| 94 |

-

|

| 95 |

-

### Model

|

| 96 |

-

|

| 97 |

-

- **Architecture:** Transformer decoder

|

| 98 |

-

- **Precision:** bfloat16

|

| 99 |

-

|

| 100 |

|

| 101 |

## Limitations

|

| 102 |

|

|

@@ -105,15 +98,22 @@ QED-Nano is a domain-specific model that is designed for one thing and one thing

|

|

| 105 |

## License

|

| 106 |

[Apache 2.0](https://www.apache.org/licenses/LICENSE-2.0)

|

| 107 |

|

| 108 |

-

##

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 109 |

|

| 110 |

-

|

| 111 |

|

| 112 |

-

|

| 113 |

-

@misc{qwed_nano2026,

|

| 114 |

-

title={{QED-Nano: Solving Olympiad Math at Gemini Level with a 4B Model}},

|

| 115 |

-

author={Setlur, Amrith and Dekoninck, Jasper and Qu, Yuxiao and Wu, Ian and Li, Jia and Beeching, Edward and Tunstall, Lewis and Kumar, Aviral},

|

| 116 |

-

year={2026},

|

| 117 |

-

howpublished={\url{https://huggingface.co/blog/smollm3}}

|

| 118 |

-

}

|

| 119 |

-

```

|

|

|

|

| 23 |

|

| 24 |

## Model Summary

|

| 25 |

|

| 26 |

+

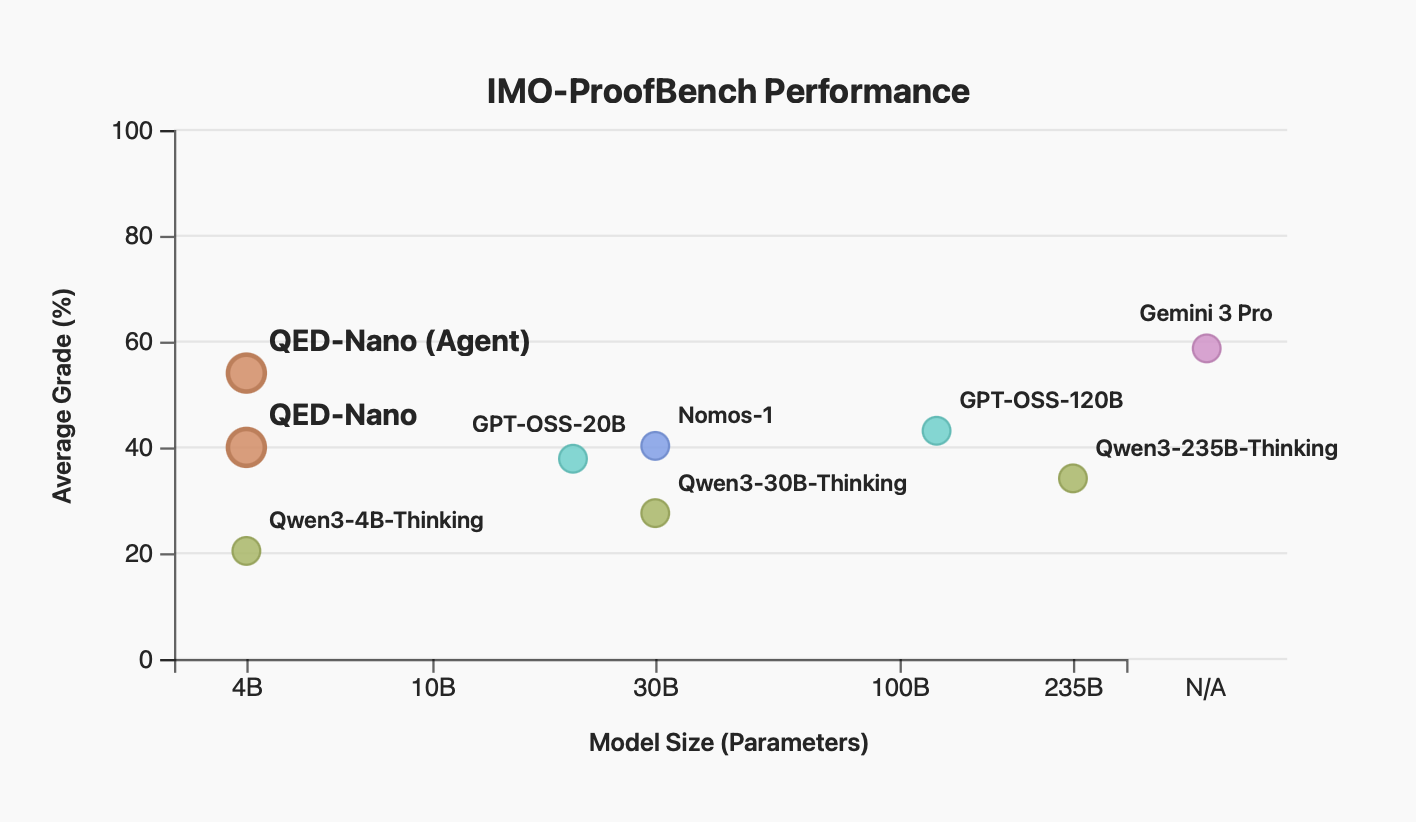

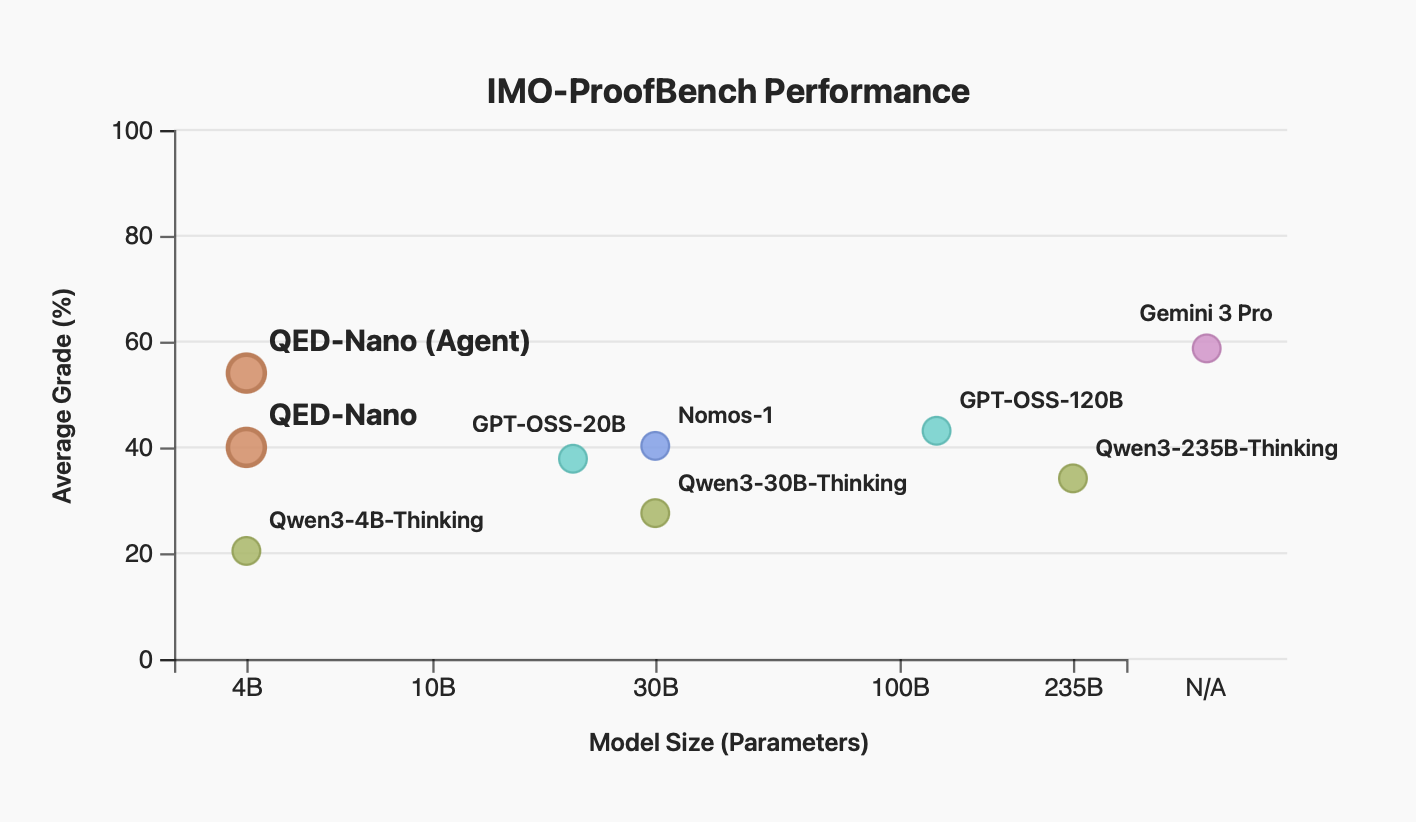

QED-Nano is a 4B parameter model explicitly post-trained to strengthen its proof-writing capabilities. Despite its small size, QED-Nano achieves an impressive 40% score on the challenging IMO-ProofBench benchmark (+20% over the Qwen3 base model), matching the performance of [GPT-OSS-120B](https://huggingface.co/openai/gpt-oss-120b) from OpenAI. With an agent scaffold that scales inference-time compute to over 1M tokens per problem, QED-Nano approaches the performance of Gemini-3-Pro:

|

| 27 |

|

| 28 |

|

| 29 |

|

| 30 |

+

QED-Nano is based on [Qwen/Qwen3-4B-Thinking-2507](https://huggingface.co/Qwen/Qwen3-4B-Thinking-2507), and was post-trained via a combination of supervised fine-tuning and [reinforcement learning with a reasoning cache](https://huggingface.co/papers/2602.03773) on a mixture of Olympiads proof problems from various public sources.

|

| 31 |

|

| 32 |

## How to use

|

| 33 |

|

|

|

|

| 90 |

|

| 91 |

[ADD TABLE]

|

| 92 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 93 |

|

| 94 |

## Limitations

|

| 95 |

|

|

|

|

| 98 |

## License

|

| 99 |

[Apache 2.0](https://www.apache.org/licenses/LICENSE-2.0)

|

| 100 |

|

| 101 |

+

## Acknowledgements

|

| 102 |

+

|

| 103 |

+

QED-Nano is a joint collaboration between the research teams at CMU, ETH Zürich, Numina, and Hugging Face. Below is a list of the individual contributors and their affiliations:

|

| 104 |

+

|

| 105 |

+

### CMU

|

| 106 |

+

|

| 107 |

+

Amrith Setlur, Yuxiao Qu, Ian Wu, and Aviral Kumar

|

| 108 |

+

|

| 109 |

+

### ETH Zürich

|

| 110 |

+

|

| 111 |

+

Jasper Dekoninck

|

| 112 |

+

|

| 113 |

+

### Numina

|

| 114 |

+

|

| 115 |

+

Jia Li

|

| 116 |

|

| 117 |

+

### Hugging Face

|

| 118 |

|

| 119 |

+

Edward Beeching and Lewis Tunstall

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|