Hello

Browse files- ComputeAgent/ComputeAgent.png +0 -0

- ComputeAgent/basic_agent_graph.png +0 -0

- ComputeAgent/chains/tool_result_chain.py +240 -0

- ComputeAgent/compute_agent_graph.png +0 -0

- ComputeAgent/graph/__init__.py +0 -0

- ComputeAgent/graph/basic_agent_graph.png +0 -0

- ComputeAgent/graph/graph.py +411 -0

- ComputeAgent/graph/graph_ReAct.py +331 -0

- ComputeAgent/graph/graph_deploy.py +363 -0

- ComputeAgent/graph/state.py +84 -0

- ComputeAgent/hivenet.jpg +0 -0

- ComputeAgent/main.py +284 -0

- ComputeAgent/models/__init__.py +0 -0

- ComputeAgent/models/doc.py +55 -0

- ComputeAgent/models/model_manager.py +100 -0

- ComputeAgent/models/model_router.py +146 -0

- ComputeAgent/nodes/ReAct/__init__.py +58 -0

- ComputeAgent/nodes/ReAct/agent_reasoning_node.py +399 -0

- ComputeAgent/nodes/ReAct/auto_approval_node.py +81 -0

- ComputeAgent/nodes/ReAct/decision_functions.py +135 -0

- ComputeAgent/nodes/ReAct/generate_node.py +510 -0

- ComputeAgent/nodes/ReAct/human_approval_node.py +284 -0

- ComputeAgent/nodes/ReAct/tool_execution_node.py +190 -0

- ComputeAgent/nodes/ReAct/tool_rejection_exit_node.py +93 -0

- ComputeAgent/nodes/ReAct_DeployModel/__init__.py +13 -0

- ComputeAgent/nodes/ReAct_DeployModel/capacity_approval.py +183 -0

- ComputeAgent/nodes/ReAct_DeployModel/capacity_estimation.py +387 -0

- ComputeAgent/nodes/ReAct_DeployModel/extract_model_info.py +291 -0

- ComputeAgent/nodes/ReAct_DeployModel/generate_additional_info.py +83 -0

- ComputeAgent/nodes/__init__.py +0 -0

- ComputeAgent/routers/compute_agent_HITL.py +590 -0

- ComputeAgent/vllm_engine_args.py +325 -0

- Compute_MCP/api_data_structure.py +398 -0

- Compute_MCP/main.py +16 -0

- Compute_MCP/tools.py +96 -0

- Compute_MCP/utils.py +26 -0

- Dockerfile +29 -0

- Gradio_interface.py +1374 -0

- README.md +12 -4

- constant.py +195 -0

- logging_setup.py +73 -0

- requirements.txt +21 -0

- run.sh +21 -0

ComputeAgent/ComputeAgent.png

ADDED

|

ComputeAgent/basic_agent_graph.png

ADDED

|

ComputeAgent/chains/tool_result_chain.py

ADDED

|

@@ -0,0 +1,240 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

"""

|

| 2 |

+

Tool Result Chain Module for ReAct Workflow

|

| 3 |

+

|

| 4 |

+

This module implements the ToolResultChain class, which serves as a specialized

|

| 5 |

+

response generation component within the ReAct (Reasoning and Acting) workflow

|

| 6 |

+

for synthesizing responses from non-researcher tool execution results.

|

| 7 |

+

|

| 8 |

+

The ToolResultChain provides professional formatting and comprehensive response

|

| 9 |

+

generation for various tool outputs (math calculations, file operations, API calls,

|

| 10 |

+

etc.) while maintaining the same quality standards as ResearcherChain and DirectAnswerChain.

|

| 11 |

+

|

| 12 |

+

The ToolResultChain is used when the ReAct workflow has executed tools other than

|

| 13 |

+

the researcher tool and needs to create a well-formatted, contextual response

|

| 14 |

+

that integrates tool results with conversation memory and user intent.

|

| 15 |

+

|

| 16 |

+

Key Features:

|

| 17 |

+

- Tool result synthesis with professional formatting

|

| 18 |

+

- Memory context integration for personalized responses

|

| 19 |

+

- Comprehensive formatting consistent with other chains

|

| 20 |

+

- Support for multiple tool result types (JSON, text, structured data)

|

| 21 |

+

- Timezone-aware timestamp integration

|

| 22 |

+

- Professional markdown structure for consistency

|

| 23 |

+

- Integration with HiveGPT system prompts for unified behavior

|

| 24 |

+

|

| 25 |

+

Author: HiveNetCode

|

| 26 |

+

License: Private

|

| 27 |

+

"""

|

| 28 |

+

|

| 29 |

+

from datetime import datetime

|

| 30 |

+

from typing import Optional, List, Dict, Any

|

| 31 |

+

from zoneinfo import ZoneInfo

|

| 32 |

+

|

| 33 |

+

from langchain_core.prompts import ChatPromptTemplate

|

| 34 |

+

from langchain_core.output_parsers import StrOutputParser

|

| 35 |

+

from langchain_openai import ChatOpenAI

|

| 36 |

+

|

| 37 |

+

from constant import Constants

|

| 38 |

+

|

| 39 |

+

class ToolResultChain:

|

| 40 |

+

"""

|

| 41 |

+

Specialized chain optimized for tool result synthesis within ReAct workflow.

|

| 42 |

+

|

| 43 |

+

This class implements a tool result-based response generation system specifically

|

| 44 |

+

designed for the ReAct (Reasoning and Acting) workflow pattern. It provides responses

|

| 45 |

+

that synthesize and contextualize tool execution results into comprehensive,

|

| 46 |

+

user-friendly answers that maintain professional presentation standards.

|

| 47 |

+

|

| 48 |

+

The ToolResultChain handles various tool output types and ensures:

|

| 49 |

+

- Professional formatting consistent with other chains

|

| 50 |

+

- Integration of tool results with user context and intent

|

| 51 |

+

- Memory-aware response generation

|

| 52 |

+

- Comprehensive explanations that go beyond raw tool output

|

| 53 |

+

|

| 54 |

+

This chain is typically used when the ReAct workflow has executed tools like:

|

| 55 |

+

- Mathematical calculations

|

| 56 |

+

- File operations

|

| 57 |

+

- API calls

|

| 58 |

+

- Data processing tools

|

| 59 |

+

- System utilities

|

| 60 |

+

And needs to present the results in a user-friendly, contextual manner.

|

| 61 |

+

"""

|

| 62 |

+

|

| 63 |

+

def __init__(self, llm: ChatOpenAI):

|

| 64 |

+

"""

|

| 65 |

+

Initialize the ToolResultChain with language model and prompt configuration.

|

| 66 |

+

|

| 67 |

+

Args:

|

| 68 |

+

llm: ChatOpenAI instance configured for response generation.

|

| 69 |

+

Should be the same model type used in other chains for consistency.

|

| 70 |

+

"""

|

| 71 |

+

self.llm = llm

|

| 72 |

+

|

| 73 |

+

# Build the system prompt for tool result synthesis

|

| 74 |

+

tool_result_system_prompt = self._build_tool_result_system_prompt()

|

| 75 |

+

|

| 76 |

+

# Create the prompt template for tool result responses

|

| 77 |

+

self.prompt = ChatPromptTemplate.from_messages([

|

| 78 |

+

("system", tool_result_system_prompt),

|

| 79 |

+

("human", self._get_human_message_template())

|

| 80 |

+

])

|

| 81 |

+

|

| 82 |

+

# Build the complete processing chain

|

| 83 |

+

self.chain = self.prompt | self.llm | StrOutputParser()

|

| 84 |

+

|

| 85 |

+

def _build_tool_result_system_prompt(self) -> str:

|

| 86 |

+

"""

|

| 87 |

+

Construct the complete system prompt for tool result synthesis.

|

| 88 |

+

|

| 89 |

+

Returns:

|

| 90 |

+

Complete system prompt combining HiveGPT base behavior with tool result instructions

|

| 91 |

+

"""

|

| 92 |

+

return Constants.GENERAL_SYSTEM_PROMPT + r"""

|

| 93 |

+

|

| 94 |

+

## TOOL RESULT SYNTHESIS INSTRUCTIONS

|

| 95 |

+

**YOU ARE SYNTHESIZING AND PRESENTING TOOL EXECUTION RESULTS.**

|

| 96 |

+

- **ANALYZE** the provided tool results and understand what was accomplished.

|

| 97 |

+

- **CONTEXTUALIZE** the results within the user's original query and intent.

|

| 98 |

+

- **PROVIDE** comprehensive explanations that go beyond just presenting raw data.

|

| 99 |

+

- **INTEGRATE** conversation context to make responses personalized and relevant.

|

| 100 |

+

- **FORMAT** responses with appropriate markdown structure for professional presentation.

|

| 101 |

+

|

| 102 |

+

### Response Quality Guidelines

|

| 103 |

+

- **Explain what was done**: Clearly describe what tool(s) were executed and why.

|

| 104 |

+

- **Present results clearly**: Format tool outputs in a user-friendly way.

|

| 105 |

+

- **Provide context**: Explain the significance or implications of the results.

|

| 106 |

+

- **Answer the user's intent**: Address the underlying question, not just the tool output.

|

| 107 |

+

- **Use professional formatting**: Employ headers, lists, code blocks as appropriate.

|

| 108 |

+

|

| 109 |

+

### Tool Result Processing

|

| 110 |

+

- **Parse and understand** different tool output formats (JSON, text, structured data).

|

| 111 |

+

- **Extract key information** and present it in an organized manner.

|

| 112 |

+

- **Explain technical details** in terms accessible to the user.

|

| 113 |

+

- **Connect results** to the user's original question or request.

|

| 114 |

+

- **Provide next steps** or additional insights when relevant.

|

| 115 |

+

|

| 116 |

+

### Professional Presentation Standards

|

| 117 |

+

- Match the formatting quality and structure used in document-based responses

|

| 118 |

+

- Provide explanations that demonstrate understanding of the tool's purpose

|

| 119 |

+

- Include practical context that helps the user understand the results

|

| 120 |

+

- Maintain consistency with HiveGPT's helpful and informative persona

|

| 121 |

+

- Use clear, professional language appropriate for the context

|

| 122 |

+

- **NEVER include technical identifiers, call IDs, or internal system references in your response**

|

| 123 |

+

- Focus on the content and meaning, not the technical implementation details

|

| 124 |

+

"""

|

| 125 |

+

|

| 126 |

+

def _get_human_message_template(self) -> str:

|

| 127 |

+

"""

|

| 128 |

+

Get the human message template for tool result synthesis.

|

| 129 |

+

|

| 130 |

+

Returns:

|

| 131 |

+

Template string for structuring tool results with user context

|

| 132 |

+

"""

|

| 133 |

+

return """**CURRENT DATE/TIME:** {currentDateTime}

|

| 134 |

+

|

| 135 |

+

**ORIGINAL USER QUERY:**

|

| 136 |

+

{query}

|

| 137 |

+

|

| 138 |

+

**TOOL EXECUTION RESULTS:**

|

| 139 |

+

{tool_results}

|

| 140 |

+

|

| 141 |

+

**CONVERSATION CONTEXT:**

|

| 142 |

+

{memory_context}

|

| 143 |

+

|

| 144 |

+

Please synthesize the tool execution results into a comprehensive, well-formatted response that addresses the user's original query. Explain what was accomplished, present the results clearly, and provide context that helps the user understand the significance of the results."""

|

| 145 |

+

|

| 146 |

+

async def ainvoke(self, query: str, tool_results: List[Any], memory_context: Optional[str] = None) -> str:

|

| 147 |

+

"""

|

| 148 |

+

Generate a comprehensive response by synthesizing tool execution results.

|

| 149 |

+

|

| 150 |

+

This method processes tool execution results and creates a well-formatted,

|

| 151 |

+

contextual response that integrates the results with the user's original

|

| 152 |

+

intent and conversation context.

|

| 153 |

+

|

| 154 |

+

The response generation process:

|

| 155 |

+

1. Analyzes and formats tool results for presentation

|

| 156 |

+

2. Integrates conversation context for personalization

|

| 157 |

+

3. Synthesizes results into a comprehensive explanation

|

| 158 |

+

4. Applies professional formatting for clarity

|

| 159 |

+

5. Ensures the response addresses the user's underlying intent

|

| 160 |

+

|

| 161 |

+

Args:

|

| 162 |

+

query: The user's original question or request that triggered tool execution.

|

| 163 |

+

Used to ensure the response addresses the user's actual intent.

|

| 164 |

+

tool_results: List of tool execution results from various tools. Can include

|

| 165 |

+

different formats (JSON strings, text, structured objects).

|

| 166 |

+

memory_context: Optional conversation context to personalize the response

|

| 167 |

+

and maintain conversation continuity.

|

| 168 |

+

|

| 169 |

+

Returns:

|

| 170 |

+

A comprehensive, well-formatted response that synthesizes tool results

|

| 171 |

+

into a user-friendly explanation with professional presentation.

|

| 172 |

+

|

| 173 |

+

Raises:

|

| 174 |

+

Exception: If response generation fails, returns an error message with

|

| 175 |

+

tool results preserved for debugging and transparency.

|

| 176 |

+

|

| 177 |

+

Example:

|

| 178 |

+

>>> chain = ToolResultChain(llm)

|

| 179 |

+

>>> tool_results = [{"status": "success", "result": 42}]

|

| 180 |

+

>>> response = await chain.ainvoke("Calculate 6*7", tool_results)

|

| 181 |

+

>>> print(response) # Comprehensive formatted response explaining the calculation

|

| 182 |

+

"""

|

| 183 |

+

try:

|

| 184 |

+

# Get current timestamp for temporal context

|

| 185 |

+

current_time = datetime.now(ZoneInfo("Europe/Rome")).strftime("%Y-%m-%d %H:%M:%S %Z")

|

| 186 |

+

|

| 187 |

+

# Format tool results for presentation

|

| 188 |

+

formatted_tool_results = self._format_tool_results(tool_results)

|

| 189 |

+

|

| 190 |

+

# Prepare memory context

|

| 191 |

+

context_text = memory_context if memory_context else "No previous conversation context available."

|

| 192 |

+

|

| 193 |

+

# Execute the tool result synthesis chain

|

| 194 |

+

result = await self.chain.ainvoke({

|

| 195 |

+

"query": query,

|

| 196 |

+

"tool_results": formatted_tool_results,

|

| 197 |

+

"memory_context": context_text,

|

| 198 |

+

"currentDateTime": current_time

|

| 199 |

+

})

|

| 200 |

+

|

| 201 |

+

return result

|

| 202 |

+

|

| 203 |

+

except Exception as e:

|

| 204 |

+

# Provide comprehensive error handling while preserving tool results

|

| 205 |

+

error_message = (

|

| 206 |

+

f"I was able to execute the requested tools, but encountered an issue synthesizing the response: {str(e)}\n\n"

|

| 207 |

+

f"Tool execution results: {self._format_tool_results(tool_results)}\n\n"

|

| 208 |

+

f"Your original query: {query}\n\n"

|

| 209 |

+

f"Please try rephrasing your question or contact support if the issue persists."

|

| 210 |

+

)

|

| 211 |

+

return error_message

|

| 212 |

+

|

| 213 |

+

def _format_tool_results(self, tool_results: List[Any]) -> str:

|

| 214 |

+

"""

|

| 215 |

+

Format tool results for presentation in the prompt.

|

| 216 |

+

|

| 217 |

+

Args:

|

| 218 |

+

tool_results: List of tool execution results in various formats

|

| 219 |

+

|

| 220 |

+

Returns:

|

| 221 |

+

Formatted string representation of tool results without technical IDs

|

| 222 |

+

"""

|

| 223 |

+

if not tool_results:

|

| 224 |

+

return "No tool results available."

|

| 225 |

+

|

| 226 |

+

formatted_results = []

|

| 227 |

+

|

| 228 |

+

for i, result in enumerate(tool_results, 1):

|

| 229 |

+

if hasattr(result, 'content'):

|

| 230 |

+

# Tool message with content attribute - exclude tool_call_id from user-facing content

|

| 231 |

+

content = result.content

|

| 232 |

+

formatted_results.append(f"Tool {i} Result:\n{content}")

|

| 233 |

+

elif isinstance(result, dict):

|

| 234 |

+

# Dictionary result

|

| 235 |

+

formatted_results.append(f"Tool {i} Result:\n{str(result)}")

|

| 236 |

+

else:

|

| 237 |

+

# Other formats

|

| 238 |

+

formatted_results.append(f"Tool {i} Result:\n{str(result)}")

|

| 239 |

+

|

| 240 |

+

return "\n\n".join(formatted_results)

|

ComputeAgent/compute_agent_graph.png

ADDED

|

ComputeAgent/graph/__init__.py

ADDED

|

File without changes

|

ComputeAgent/graph/basic_agent_graph.png

ADDED

|

ComputeAgent/graph/graph.py

ADDED

|

@@ -0,0 +1,411 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

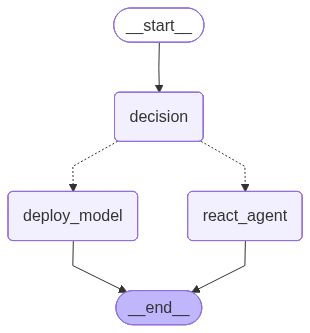

+

"""

|

| 2 |

+

Basic Agent Main Graph Module (FastAPI Compatible - Minimal Changes)

|

| 3 |

+

|

| 4 |

+

This module implements the core workflow graph for the Basic Agent system.

|

| 5 |

+

It defines the agent's decision-making flow between model deployment and

|

| 6 |

+

React-based compute workflows.

|

| 7 |

+

|

| 8 |

+

CHANGES FROM ORIGINAL:

|

| 9 |

+

- __init__ now accepts optional tools and llm parameters

|

| 10 |

+

- Added async create() classmethod for FastAPI

|

| 11 |

+

- Fully backwards compatible with existing CLI code

|

| 12 |

+

|

| 13 |

+

Author: Your Name

|

| 14 |

+

License: Private

|

| 15 |

+

"""

|

| 16 |

+

|

| 17 |

+

import asyncio

|

| 18 |

+

from typing import Dict, Any, List, Optional

|

| 19 |

+

import uuid

|

| 20 |

+

import json

|

| 21 |

+

import logging

|

| 22 |

+

|

| 23 |

+

from langgraph.graph import StateGraph, END, START

|

| 24 |

+

from typing_extensions import TypedDict

|

| 25 |

+

from constant import Constants

|

| 26 |

+

|

| 27 |

+

# Import node functions (to be implemented in separate files)

|

| 28 |

+

from langgraph.checkpoint.memory import MemorySaver

|

| 29 |

+

from graph.graph_deploy import DeployModelAgent

|

| 30 |

+

from graph.graph_ReAct import ReactWorkflow

|

| 31 |

+

from models.model_manager import ModelManager

|

| 32 |

+

from langchain_core.messages import HumanMessage, SystemMessage

|

| 33 |

+

from langchain_mcp_adapters.client import MultiServerMCPClient

|

| 34 |

+

from graph.state import AgentState

|

| 35 |

+

|

| 36 |

+

# Initialize model manager for dynamic LLM loading and management

|

| 37 |

+

model_manager = ModelManager()

|

| 38 |

+

|

| 39 |

+

# Global MemorySaver (persists state across requests)

|

| 40 |

+

memory_saver = MemorySaver()

|

| 41 |

+

|

| 42 |

+

logger = logging.getLogger("ComputeAgent")

|

| 43 |

+

|

| 44 |

+

mcp_client = MultiServerMCPClient(

|

| 45 |

+

{

|

| 46 |

+

"hivecompute": {

|

| 47 |

+

"command": "python",

|

| 48 |

+

"args": ["/home/hivenet/Compute_MCP/main.py"],

|

| 49 |

+

"transport": "stdio"

|

| 50 |

+

}

|

| 51 |

+

}

|

| 52 |

+

)

|

| 53 |

+

|

| 54 |

+

class ComputeAgent:

|

| 55 |

+

"""

|

| 56 |

+

Main Compute Agent class providing AI-powered decision routing and execution.

|

| 57 |

+

|

| 58 |

+

This class orchestrates the complete agent workflow including:

|

| 59 |

+

- Decision routing between model deployment and React agent

|

| 60 |

+

- Model deployment workflow with capacity estimation and approval

|

| 61 |

+

- React agent execution with compute capabilities

|

| 62 |

+

- Error handling and state management

|

| 63 |

+

|

| 64 |

+

Attributes:

|

| 65 |

+

graph: Compiled LangGraph workflow

|

| 66 |

+

model_name: Default model name for operations

|

| 67 |

+

|

| 68 |

+

Usage:

|

| 69 |

+

# For CLI (backwards compatible):

|

| 70 |

+

agent = ComputeAgent()

|

| 71 |

+

|

| 72 |

+

# For FastAPI (async):

|

| 73 |

+

agent = await ComputeAgent.create()

|

| 74 |

+

"""

|

| 75 |

+

|

| 76 |

+

def __init__(self, tools=None, llm=None):

|

| 77 |

+

"""

|

| 78 |

+

Initialize Compute Agent with optional pre-loaded dependencies.

|

| 79 |

+

|

| 80 |

+

Args:

|

| 81 |

+

tools: Pre-loaded MCP tools (optional, will load if not provided)

|

| 82 |

+

llm: Pre-loaded LLM model (optional, will load if not provided)

|

| 83 |

+

"""

|

| 84 |

+

# If tools/llm not provided, load them synchronously (for CLI)

|

| 85 |

+

if tools is None:

|

| 86 |

+

self.tools = asyncio.run(mcp_client.get_tools())

|

| 87 |

+

else:

|

| 88 |

+

self.tools = tools

|

| 89 |

+

|

| 90 |

+

if llm is None:

|

| 91 |

+

self.llm = asyncio.run(model_manager.load_llm_model(Constants.DEFAULT_LLM_FC))

|

| 92 |

+

else:

|

| 93 |

+

self.llm = llm

|

| 94 |

+

|

| 95 |

+

self.deploy_subgraph = DeployModelAgent(llm=self.llm, react_tools=self.tools)

|

| 96 |

+

self.react_subgraph = ReactWorkflow(llm=self.llm, tools=self.tools)

|

| 97 |

+

self.graph = self._create_graph()

|

| 98 |

+

|

| 99 |

+

@classmethod

|

| 100 |

+

async def create(cls):

|

| 101 |

+

"""

|

| 102 |

+

Async factory method for creating ComputeAgent.

|

| 103 |

+

Use this in FastAPI to avoid asyncio.run() issues.

|

| 104 |

+

|

| 105 |

+

Returns:

|

| 106 |

+

Initialized ComputeAgent instance

|

| 107 |

+

"""

|

| 108 |

+

logger.info("🔧 Loading tools and LLM asynchronously...")

|

| 109 |

+

tools = await mcp_client.get_tools()

|

| 110 |

+

llm = await model_manager.load_llm_model(Constants.DEFAULT_LLM_FC)

|

| 111 |

+

# Initialize DeployModelAgent with its own tools

|

| 112 |

+

deploy_subgraph = await DeployModelAgent.create(llm=llm, custom_tools=None)

|

| 113 |

+

return cls(tools=tools, llm=llm)

|

| 114 |

+

|

| 115 |

+

async def decision_node(self, state: Dict[str, Any]) -> Dict[str, Any]:

|

| 116 |

+

"""

|

| 117 |

+

Node that handles routing decisions for the ComputeAgent workflow.

|

| 118 |

+

|

| 119 |

+

Analyzes the user query to determine whether to route to:

|

| 120 |

+

- Model deployment workflow (deploy_model)

|

| 121 |

+

- React agent workflow (react_agent)

|

| 122 |

+

|

| 123 |

+

Args:

|

| 124 |

+

state: Current agent state with memory fields

|

| 125 |

+

|

| 126 |

+

Returns:

|

| 127 |

+

Updated state with routing decision

|

| 128 |

+

"""

|

| 129 |

+

# Get user context

|

| 130 |

+

user_id = state.get("user_id", "")

|

| 131 |

+

session_id = state.get("session_id", "")

|

| 132 |

+

query = state.get("query", "")

|

| 133 |

+

|

| 134 |

+

logger.info(f"🎯 Decision node processing query for {user_id}:{session_id}")

|

| 135 |

+

|

| 136 |

+

# Build memory context for decision making

|

| 137 |

+

memory_context = ""

|

| 138 |

+

if user_id and session_id:

|

| 139 |

+

try:

|

| 140 |

+

from helpers.memory import get_memory_manager

|

| 141 |

+

memory_manager = get_memory_manager()

|

| 142 |

+

memory_context = await memory_manager.build_context_for_node(user_id, session_id, "decision")

|

| 143 |

+

if memory_context:

|

| 144 |

+

logger.info(f"🧠 Using memory context for decision routing")

|

| 145 |

+

except Exception as e:

|

| 146 |

+

logger.warning(f"⚠️ Could not load memory context for decision: {e}")

|

| 147 |

+

|

| 148 |

+

try:

|

| 149 |

+

# Create a simple LLM for decision making

|

| 150 |

+

# Load main LLM using ModelManager

|

| 151 |

+

llm = await model_manager.load_llm_model(Constants.DEFAULT_LLM_NAME)

|

| 152 |

+

|

| 153 |

+

# Create decision prompt

|

| 154 |

+

decision_system_prompt = f"""

|

| 155 |

+

You are a routing assistant for ComputeAgent. Analyze the user's query and decide which workflow to use.

|

| 156 |

+

|

| 157 |

+

Choose between:

|

| 158 |

+

1. DEPLOY_MODEL - For queries about deploy AI model from HuggingFace. In this case the user MUST specify the model card name (like meta-llama/Meta-Llama-3-70B).

|

| 159 |

+

- The user can specify the hardware capacity needed.

|

| 160 |

+

- The user can ask for model analysis, deployment steps, or capacity estimation.

|

| 161 |

+

|

| 162 |

+

2. REACT_AGENT - For all the rest of queries.

|

| 163 |

+

|

| 164 |

+

{f"Conversation Context: {memory_context}" if memory_context else "No conversation context available."}

|

| 165 |

+

|

| 166 |

+

User Query: {query}

|

| 167 |

+

|

| 168 |

+

Respond with only: "DEPLOY_MODEL" or "REACT_AGENT"

|

| 169 |

+

"""

|

| 170 |

+

|

| 171 |

+

# Get routing decision

|

| 172 |

+

decision_response = await llm.ainvoke([

|

| 173 |

+

SystemMessage(content=decision_system_prompt)

|

| 174 |

+

])

|

| 175 |

+

|

| 176 |

+

routing_decision = decision_response.content.strip().upper()

|

| 177 |

+

|

| 178 |

+

# Validate and set decision

|

| 179 |

+

if "DEPLOY_MODEL" in routing_decision:

|

| 180 |

+

agent_decision = "deploy_model"

|

| 181 |

+

logger.info(f"📦 Routing to model deployment workflow")

|

| 182 |

+

elif "REACT_AGENT" in routing_decision:

|

| 183 |

+

agent_decision = "react_agent"

|

| 184 |

+

logger.info(f"⚛️ Routing to React agent workflow")

|

| 185 |

+

else:

|

| 186 |

+

# Default fallback to React agent for general queries

|

| 187 |

+

agent_decision = "react_agent"

|

| 188 |

+

logger.warning(f"⚠️ Ambiguous routing decision '{routing_decision}', defaulting to React agent")

|

| 189 |

+

|

| 190 |

+

# Update state with decision

|

| 191 |

+

updated_state = state.copy()

|

| 192 |

+

updated_state["agent_decision"] = agent_decision

|

| 193 |

+

updated_state["current_step"] = "decision_complete"

|

| 194 |

+

|

| 195 |

+

logger.info(f"✅ Decision node complete: {agent_decision}")

|

| 196 |

+

return updated_state

|

| 197 |

+

|

| 198 |

+

except Exception as e:

|

| 199 |

+

logger.error(f"❌ Error in decision node: {e}")

|

| 200 |

+

|

| 201 |

+

# Update state with fallback decision

|

| 202 |

+

updated_state = state.copy()

|

| 203 |

+

updated_state["error"] = f"Decision error (fallback used): {str(e)}"

|

| 204 |

+

|

| 205 |

+

return updated_state

|

| 206 |

+

|

| 207 |

+

def _create_graph(self) -> StateGraph:

|

| 208 |

+

"""

|

| 209 |

+

Create and configure the Compute Agent workflow graph.

|

| 210 |

+

|

| 211 |

+

This method builds the complete workflow including:

|

| 212 |

+

1. Initial decision node - routes to deployment or React agent

|

| 213 |

+

2. Model deployment path:

|

| 214 |

+

- Fetch model card from HuggingFace

|

| 215 |

+

- Extract model information

|

| 216 |

+

- Estimate capacity requirements

|

| 217 |

+

- Human approval checkpoint

|

| 218 |

+

- Deploy model or provide info

|

| 219 |

+

3. React agent path:

|

| 220 |

+

- Execute React agent with compute MCP capabilities

|

| 221 |

+

|

| 222 |

+

Returns:

|

| 223 |

+

Compiled StateGraph ready for execution

|

| 224 |

+

"""

|

| 225 |

+

workflow = StateGraph(AgentState)

|

| 226 |

+

|

| 227 |

+

# Add decision node

|

| 228 |

+

workflow.add_node("decision", self.decision_node)

|

| 229 |

+

|

| 230 |

+

# Add model deployment workflow nodes

|

| 231 |

+

workflow.add_node("deploy_model", self.deploy_subgraph.get_compiled_graph())

|

| 232 |

+

|

| 233 |

+

# Add React agent node

|

| 234 |

+

workflow.add_node("react_agent", self.react_subgraph.get_compiled_graph())

|

| 235 |

+

|

| 236 |

+

# Set entry point

|

| 237 |

+

workflow.set_entry_point("decision")

|

| 238 |

+

|

| 239 |

+

# Add conditional edges from decision node

|

| 240 |

+

workflow.add_conditional_edges(

|

| 241 |

+

"decision",

|

| 242 |

+

lambda state: state["agent_decision"],

|

| 243 |

+

{

|

| 244 |

+

"deploy_model": "deploy_model",

|

| 245 |

+

"react_agent": "react_agent",

|

| 246 |

+

}

|

| 247 |

+

)

|

| 248 |

+

|

| 249 |

+

# Add edges to END

|

| 250 |

+

workflow.add_edge("deploy_model", END)

|

| 251 |

+

workflow.add_edge("react_agent", END)

|

| 252 |

+

|

| 253 |

+

# Compile with checkpointer

|

| 254 |

+

return workflow.compile(checkpointer=memory_saver)

|

| 255 |

+

|

| 256 |

+

def get_compiled_graph(self):

|

| 257 |

+

"""Return the compiled graph for use in FastAPI"""

|

| 258 |

+

return self.graph

|

| 259 |

+

|

| 260 |

+

def invoke(self, query: str, user_id: str = "default_user", session_id: str = "default_session") -> Dict[str, Any]:

|

| 261 |

+

"""

|

| 262 |

+

Execute the graph with a given query and memory context (synchronous wrapper for async).

|

| 263 |

+

|

| 264 |

+

Args:

|

| 265 |

+

query: User's query

|

| 266 |

+

user_id: User identifier for memory management

|

| 267 |

+

session_id: Session identifier for memory management

|

| 268 |

+

|

| 269 |

+

Returns:

|

| 270 |

+

Final result from the graph execution

|

| 271 |

+

"""

|

| 272 |

+

return asyncio.run(self.ainvoke(query, user_id, session_id))

|

| 273 |

+

|

| 274 |

+

async def ainvoke(self, query: str, user_id: str = "default_user", session_id: str = "default_session") -> Dict[str, Any]:

|

| 275 |

+

"""

|

| 276 |

+

Execute the graph with a given query and memory context (async).

|

| 277 |

+

|

| 278 |

+

Args:

|

| 279 |

+

query: User's query

|

| 280 |

+

user_id: User identifier for memory management

|

| 281 |

+

session_id: Session identifier for memory management

|

| 282 |

+

|

| 283 |

+

Returns:

|

| 284 |

+

Final result from the graph execution containing:

|

| 285 |

+

- response: Final response to user

|

| 286 |

+

- agent_decision: Which path was taken

|

| 287 |

+

- deployment_result: If deployment path was taken

|

| 288 |

+

- react_results: If React agent path was taken

|

| 289 |

+

"""

|

| 290 |

+

initial_state = {

|

| 291 |

+

"user_id": user_id,

|

| 292 |

+

"session_id": session_id,

|

| 293 |

+

"query": query,

|

| 294 |

+

"response": "",

|

| 295 |

+

"current_step": "start",

|

| 296 |

+

"agent_decision": "",

|

| 297 |

+

"deployment_approved": False,

|

| 298 |

+

"model_name": "",

|

| 299 |

+

"model_card": {},

|

| 300 |

+

"model_info": {},

|

| 301 |

+

"capacity_estimate": {},

|

| 302 |

+

"deployment_result": {},

|

| 303 |

+

"react_results": {},

|

| 304 |

+

"tool_calls": [],

|

| 305 |

+

"tool_results": [],

|

| 306 |

+

"messages": [],

|

| 307 |

+

# Approval fields for ReactWorkflow

|

| 308 |

+

"pending_tool_calls": [],

|

| 309 |

+

"approved_tool_calls": [],

|

| 310 |

+

"rejected_tool_calls": [],

|

| 311 |

+

"modified_tool_calls": [],

|

| 312 |

+

"needs_re_reasoning": False,

|

| 313 |

+

"re_reasoning_feedback": ""

|

| 314 |

+

}

|

| 315 |

+

|

| 316 |

+

# Create config with thread_id for checkpointer

|

| 317 |

+

config = {

|

| 318 |

+

"configurable": {

|

| 319 |

+

"thread_id": f"{user_id}_{session_id}"

|

| 320 |

+

}

|

| 321 |

+

}

|

| 322 |

+

|

| 323 |

+

try:

|

| 324 |

+

result = await self.graph.ainvoke(initial_state, config)

|

| 325 |

+

return result

|

| 326 |

+

|

| 327 |

+

except Exception as e:

|

| 328 |

+

logger.error(f"Error in graph execution: {e}")

|

| 329 |

+

return {

|

| 330 |

+

**initial_state,

|

| 331 |

+

"error": str(e),

|

| 332 |

+

"error_step": initial_state.get("current_step", "unknown"),

|

| 333 |

+

"response": f"An error occurred during execution: {str(e)}"

|

| 334 |

+

}

|

| 335 |

+

|

| 336 |

+

async def astream_generate_nodes(self, query: str, user_id: str = "default_user", session_id: str = "default_session"):

|

| 337 |

+

"""

|

| 338 |

+

Stream the graph execution node by node (async).

|

| 339 |

+

|

| 340 |

+

Args:

|

| 341 |

+

query: User's query

|

| 342 |

+

user_id: User identifier for memory management

|

| 343 |

+

session_id: Session identifier for memory management

|

| 344 |

+

|

| 345 |

+

Yields:

|

| 346 |

+

Dict containing node execution updates

|

| 347 |

+

"""

|

| 348 |

+

initial_state = {

|

| 349 |

+

"user_id": user_id,

|

| 350 |

+

"session_id": session_id,

|

| 351 |

+

"query": query,

|

| 352 |

+

"response": "",

|

| 353 |

+

"current_step": "start",

|

| 354 |

+

"agent_decision": "",

|

| 355 |

+

"deployment_approved": False,

|

| 356 |

+

"model_name": "",

|

| 357 |

+

"model_card": {},

|

| 358 |

+

"model_info": {},

|

| 359 |

+

"capacity_estimate": {},

|

| 360 |

+

"deployment_result": {},

|

| 361 |

+

"react_results": {},

|

| 362 |

+

"tool_calls": [],

|

| 363 |

+

"tool_results": [],

|

| 364 |

+

"messages": [],

|

| 365 |

+

# Approval fields for ReactWorkflow

|

| 366 |

+

"pending_tool_calls": [],

|

| 367 |

+

"approved_tool_calls": [],

|

| 368 |

+

"rejected_tool_calls": [],

|

| 369 |

+

"modified_tool_calls": [],

|

| 370 |

+

"needs_re_reasoning": False,

|

| 371 |

+

"re_reasoning_feedback": ""

|

| 372 |

+

}

|

| 373 |

+

|

| 374 |

+

# Create config with thread_id for checkpointer

|

| 375 |

+

config = {

|

| 376 |

+

"configurable": {

|

| 377 |

+

"thread_id": f"{user_id}_{session_id}"

|

| 378 |

+

}

|

| 379 |

+

}

|

| 380 |

+

|

| 381 |

+

try:

|

| 382 |

+

# Stream through the graph execution

|

| 383 |

+

async for chunk in self.graph.astream(initial_state, config):

|

| 384 |

+

# Each chunk contains the node name and its output

|

| 385 |

+

for node_name, node_output in chunk.items():

|

| 386 |

+

yield {

|

| 387 |

+

"node": node_name,

|

| 388 |

+

"output": node_output,

|

| 389 |

+

**node_output # Include all state updates

|

| 390 |

+

}

|

| 391 |

+

|

| 392 |

+

except Exception as e:

|

| 393 |

+

logger.error(f"Error in graph streaming: {e}")

|

| 394 |

+

yield {

|

| 395 |

+

"error": str(e),

|

| 396 |

+

"status": "error",

|

| 397 |

+

"error_step": initial_state.get("current_step", "unknown")

|

| 398 |

+

}

|

| 399 |

+

|

| 400 |

+

def draw_graph(self, output_file_path: str = "basic_agent_graph.png"):

|

| 401 |

+

"""

|

| 402 |

+

Generate and save a visual representation of the Basic Agent workflow graph.

|

| 403 |

+

|

| 404 |

+

Args:

|

| 405 |

+

output_file_path: Path where to save the graph PNG file

|

| 406 |

+

"""

|

| 407 |

+

try:

|

| 408 |

+

self.graph.get_graph().draw_mermaid_png(output_file_path=output_file_path)

|

| 409 |

+

logger.info(f"✅ Basic Agent graph visualization saved to: {output_file_path}")

|

| 410 |

+

except Exception as e:

|

| 411 |

+

logger.error(f"❌ Failed to generate Basic Agent graph visualization: {e}")

|

ComputeAgent/graph/graph_ReAct.py

ADDED

|

@@ -0,0 +1,331 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

"""

|

| 3 |

+

HiveGPT Agent ReAct Graph Module

|

| 4 |

+

|

| 5 |

+

This module implements the ReAct workflow for the HiveGPT Agent system.

|

| 6 |

+

It orchestrates agent reasoning, human approval, tool execution, and response refinement

|

| 7 |

+

using LangGraph for workflow management and memory support.

|

| 8 |

+

|

| 9 |

+

Key Features:

|

| 10 |

+

- Human-in-the-loop approval for tool execution

|

| 11 |

+

- MCP tool integration

|

| 12 |

+

- Memory-enabled state management

|

| 13 |

+

- Modular node functions for extensibility

|

| 14 |

+

|

| 15 |

+

Author: HiveNetCode

|

| 16 |

+

License: Private

|

| 17 |

+

"""

|

| 18 |

+

|

| 19 |

+

from typing import Sequence, Dict, Any

|

| 20 |

+

from langchain_core.tools import BaseTool

|

| 21 |

+

from langchain_core.messages import HumanMessage

|

| 22 |

+

from langgraph.graph import StateGraph, END

|

| 23 |

+

import logging

|

| 24 |

+

from typing_extensions import TypedDict

|

| 25 |

+

from typing import Dict, Any, Sequence, List

|

| 26 |

+

from langchain_core.messages import BaseMessage

|

| 27 |

+

from langchain_core.tools import BaseTool

|

| 28 |

+

from langchain_openai.chat_models import ChatOpenAI

|

| 29 |

+

from graph.state import AgentState

|

| 30 |

+

|

| 31 |

+

from nodes.ReAct import (

|

| 32 |

+

agent_reasoning_node,

|

| 33 |

+

human_approval_node,

|

| 34 |

+

auto_approval_node,

|

| 35 |

+

tool_execution_node,

|

| 36 |

+

generate_node,

|

| 37 |

+

tool_rejection_exit_node,

|

| 38 |

+

should_continue_to_approval,

|

| 39 |

+

should_continue_after_approval,

|

| 40 |

+

should_continue_after_execution

|

| 41 |

+

)

|

| 42 |

+

logger = logging.getLogger("ReAct Workflow")

|

| 43 |

+

|

| 44 |

+

# Global registries (to avoid serialization issues with checkpointer)

|

| 45 |

+

# Nodes access tools and LLM from here instead of storing them in state

|

| 46 |

+

_TOOLS_REGISTRY = {}

|

| 47 |

+

_LLM_REGISTRY = {}

|

| 48 |

+

|

| 49 |

+

|

| 50 |

+

# State class for ReAct workflow

|

| 51 |

+

class ReactState(AgentState):

|

| 52 |

+

"""

|

| 53 |

+

ReactState extends HiveGPTMemoryState to support ReAct workflow fields.

|

| 54 |

+

"""

|

| 55 |

+

pass

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

# Main workflow class for ReAct

|

| 59 |

+

class ReactWorkflow:

|

| 60 |

+

"""

|

| 61 |

+

Orchestrates the ReAct workflow:

|

| 62 |

+

1. Agent reasoning and tool selection

|

| 63 |

+

2. Human approval for tool execution

|

| 64 |

+

3. Tool execution (special handling for researcher tool)

|

| 65 |

+

4. Response refinement (skipped for researcher tool)

|

| 66 |

+

|

| 67 |

+

Features:

|

| 68 |

+

- MCP tool integration

|

| 69 |

+

- Human-in-the-loop approval for all tool calls

|

| 70 |

+

- Special handling for researcher tool (bypasses refinement, uses generate_node)

|

| 71 |

+

- Memory management with conversation summaries and recent message context

|

| 72 |

+

- Proper state management following AgenticRAG pattern

|

| 73 |

+

"""

|

| 74 |

+

def __init__(self, llm, tools: Sequence[BaseTool]):

|

| 75 |

+

"""

|

| 76 |

+

Initialize ReAct workflow with LLMs, tools, and optional memory checkpointer.

|

| 77 |

+

|

| 78 |

+

Args:

|

| 79 |

+

llm: Main LLM for reasoning (will be bound with tools)

|

| 80 |

+

refining_llm: LLM for response refinement

|

| 81 |

+

tools: Sequence of MCP tools for execution

|

| 82 |

+

checkpointer: Optional memory checkpointer for conversation memory

|

| 83 |

+

"""

|

| 84 |

+

self.llm = llm.bind_tools(tools)

|

| 85 |

+