Commit 676df31 (verified)

Duplicate from OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview

Co-authored-by: Weiyun Wang <[email protected]>

- .gitattributes +38 -0

- README.md +1486 -0

- chat_template.jinja +397 -0

- config.json +124 -0

- configuration_intern_vit.py +119 -0

- configuration_internvl_chat.py +118 -0

- conversation.py +416 -0

- examples/image1.jpg +0 -0

- examples/image2.jpg +3 -0

- examples/red-panda.mp4 +3 -0

- generation_config.json +4 -0

- model-00001-of-00009.safetensors +3 -0

- model-00002-of-00009.safetensors +3 -0

- model-00003-of-00009.safetensors +3 -0

- model-00004-of-00009.safetensors +3 -0

- model-00005-of-00009.safetensors +3 -0

- model-00006-of-00009.safetensors +3 -0

- model-00007-of-00009.safetensors +3 -0

- model-00008-of-00009.safetensors +3 -0

- model-00009-of-00009.safetensors +3 -0

- model.safetensors.index.json +765 -0

- modeling_intern_vit.py +433 -0

- modeling_internvl_chat.py +399 -0

- preprocessor_config.json +34 -0

- processor_config.json +4 -0

- special_tokens_map.json +23 -0

- tokenizer.json +3 -0

- tokenizer_config.json +256 -0

- video_preprocessor_config.json +70 -0
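The nine `model-0000N-of-00009.safetensors` shards are tied together by `model.safetensors.index.json`. Below is a minimal Python sketch for seeing which shard holds which tensor. It assumes the usual sharded-checkpoint index layout, with a top-level `weight_map` object. The tensor names in the example index are hypothetical placeholders and were not read from this repository.

```python
import json
from collections import defaultdict


def tensors_by_shard(index_text: str) -> dict:
    """Group tensor names by the shard file that stores them.

    Assumes the usual sharded-checkpoint index layout:
    {"metadata": {...}, "weight_map": {tensor_name: shard_filename}}.
    """
    weight_map = json.loads(index_text)["weight_map"]
    groups = defaultdict(list)
    for tensor_name, shard in weight_map.items():
        groups[shard].append(tensor_name)
    # Sort shards and tensor names so the result is deterministic.
    return {shard: sorted(names) for shard, names in sorted(groups.items())}


# Hypothetical tensor names, for illustration only (not read from this repo).
example_index = json.dumps({
    "metadata": {"total_size": 0},
    "weight_map": {
        "vision_model.embeddings.patch_embedding.weight": "model-00001-of-00009.safetensors",
        "mlp1.0.weight": "model-00001-of-00009.safetensors",
        "language_model.lm_head.weight": "model-00009-of-00009.safetensors",
    },
})

groups = tensors_by_shard(example_index)
```

Against a local clone, pass `open("model.safetensors.index.json").read()` instead of `example_index`.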

.gitattributes

ADDED

@@ -0,0 +1,38 @@

*.7z filter=lfs diff=lfs merge=lfs -text

*.arrow filter=lfs diff=lfs merge=lfs -text

*.bin filter=lfs diff=lfs merge=lfs -text

*.bz2 filter=lfs diff=lfs merge=lfs -text

*.ckpt filter=lfs diff=lfs merge=lfs -text

*.ftz filter=lfs diff=lfs merge=lfs -text

*.gz filter=lfs diff=lfs merge=lfs -text

*.h5 filter=lfs diff=lfs merge=lfs -text

*.joblib filter=lfs diff=lfs merge=lfs -text

*.lfs.* filter=lfs diff=lfs merge=lfs -text

*.mlmodel filter=lfs diff=lfs merge=lfs -text

*.model filter=lfs diff=lfs merge=lfs -text

*.msgpack filter=lfs diff=lfs merge=lfs -text

*.npy filter=lfs diff=lfs merge=lfs -text

*.npz filter=lfs diff=lfs merge=lfs -text

*.onnx filter=lfs diff=lfs merge=lfs -text

*.ot filter=lfs diff=lfs merge=lfs -text

*.parquet filter=lfs diff=lfs merge=lfs -text

*.pb filter=lfs diff=lfs merge=lfs -text

*.pickle filter=lfs diff=lfs merge=lfs -text

*.pkl filter=lfs diff=lfs merge=lfs -text

*.pt filter=lfs diff=lfs merge=lfs -text

*.pth filter=lfs diff=lfs merge=lfs -text

*.rar filter=lfs diff=lfs merge=lfs -text

*.safetensors filter=lfs diff=lfs merge=lfs -text

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

*.tar.* filter=lfs diff=lfs merge=lfs -text

*.tar filter=lfs diff=lfs merge=lfs -text

*.tflite filter=lfs diff=lfs merge=lfs -text

*.tgz filter=lfs diff=lfs merge=lfs -text

*.wasm filter=lfs diff=lfs merge=lfs -text

*.xz filter=lfs diff=lfs merge=lfs -text

*.zip filter=lfs diff=lfs merge=lfs -text

*.zst filter=lfs diff=lfs merge=lfs -text

*tfevents* filter=lfs diff=lfs merge=lfs -text

tokenizer.json filter=lfs diff=lfs merge=lfs -text

examples/image2.jpg filter=lfs diff=lfs merge=lfs -text

examples/red-panda.mp4 filter=lfs diff=lfs merge=lfs -text
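Every file in the list above that falls under these rules shows `+3 -0`: the nine safetensors shards, `tokenizer.json`, `examples/image2.jpg` and `examples/red-panda.mp4`. This is consistent with Git LFS committing a three-line pointer file in place of the content. The Python sketch below approximates which paths the rules catch. It uses `fnmatch` as a stand-in for Git's own matcher, so the result is only an approximation; the docstring spells out where it differs.

```python
from fnmatch import fnmatchcase
from pathlib import PurePosixPath

# The 38 patterns from the .gitattributes above, in file order.
LFS_PATTERNS = (
    "*.7z", "*.arrow", "*.bin", "*.bz2", "*.ckpt", "*.ftz", "*.gz", "*.h5",
    "*.joblib", "*.lfs.*", "*.mlmodel", "*.model", "*.msgpack", "*.npy",
    "*.npz", "*.onnx", "*.ot", "*.parquet", "*.pb", "*.pickle", "*.pkl",
    "*.pt", "*.pth", "*.rar", "*.safetensors", "saved_model/**/*", "*.tar.*",
    "*.tar", "*.tflite", "*.tgz", "*.wasm", "*.xz", "*.zip", "*.zst",
    "*tfevents*", "tokenizer.json", "examples/image2.jpg",
    "examples/red-panda.mp4",
)


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Rough check of whether a repo-relative POSIX path falls under an LFS rule.

    Approximation of gitattributes matching: a pattern with no "/" is matched
    against the basename only; a pattern containing "/" is matched against
    the full path. Unlike Git, fnmatch's "*" also crosses "/", and "**/" is
    not treated as "zero or more directories".
    """
    basename = PurePosixPath(path).name
    return any(
        fnmatchcase(path if "/" in pattern else basename, pattern)
        for pattern in patterns
    )
```

For example, `[p for p in ("config.json", "tokenizer.json") if is_lfs_tracked(p)]` keeps only `tokenizer.json`.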

README.md

ADDED

@@ -0,0 +1,1486 @@

---
license: apache-2.0
pipeline_tag: image-text-to-text
library_name: transformers
base_model:
- OpenGVLab/InternViT-300M-448px-V2_5
- openai/gpt-oss-20b
base_model_relation: merge
datasets:
- OpenGVLab/MMPR-v1.2
- OpenGVLab/MMPR-Tiny
language:
- multilingual
tags:
- internvl
- custom_code
---

# InternVL3_5-GPT-OSS-20B-A4B-Preview


[\[📂 GitHub\]](https://github.com/OpenGVLab/InternVL) [\[📜 InternVL 1.0\]](https://huggingface.co/papers/2312.14238) [\[📜 InternVL 1.5\]](https://huggingface.co/papers/2404.16821) [\[📜 InternVL 2.5\]](https://huggingface.co/papers/2412.05271) [\[📜 InternVL2.5-MPO\]](https://huggingface.co/papers/2411.10442) [\[📜 InternVL3\]](https://huggingface.co/papers/2504.10479) [\[📜 InternVL3.5\]](https://huggingface.co/papers/2508.18265)


[\[🆕 Blog\]](https://internvl.github.io/blog/) [\[🗨️ Chat Demo\]](https://chat.intern-ai.org.cn/) [\[🚀 Quick Start\]](#quick-start) [\[📖 Documents\]](https://internvl.readthedocs.io/en/latest/)


<div align="center">


<img width="500" alt="image" src="https://cdn-uploads.huggingface.co/production/uploads/64006c09330a45b03605bba3/zJsd2hqd3EevgXo6fNgC-.png">


</div>


## Introduction


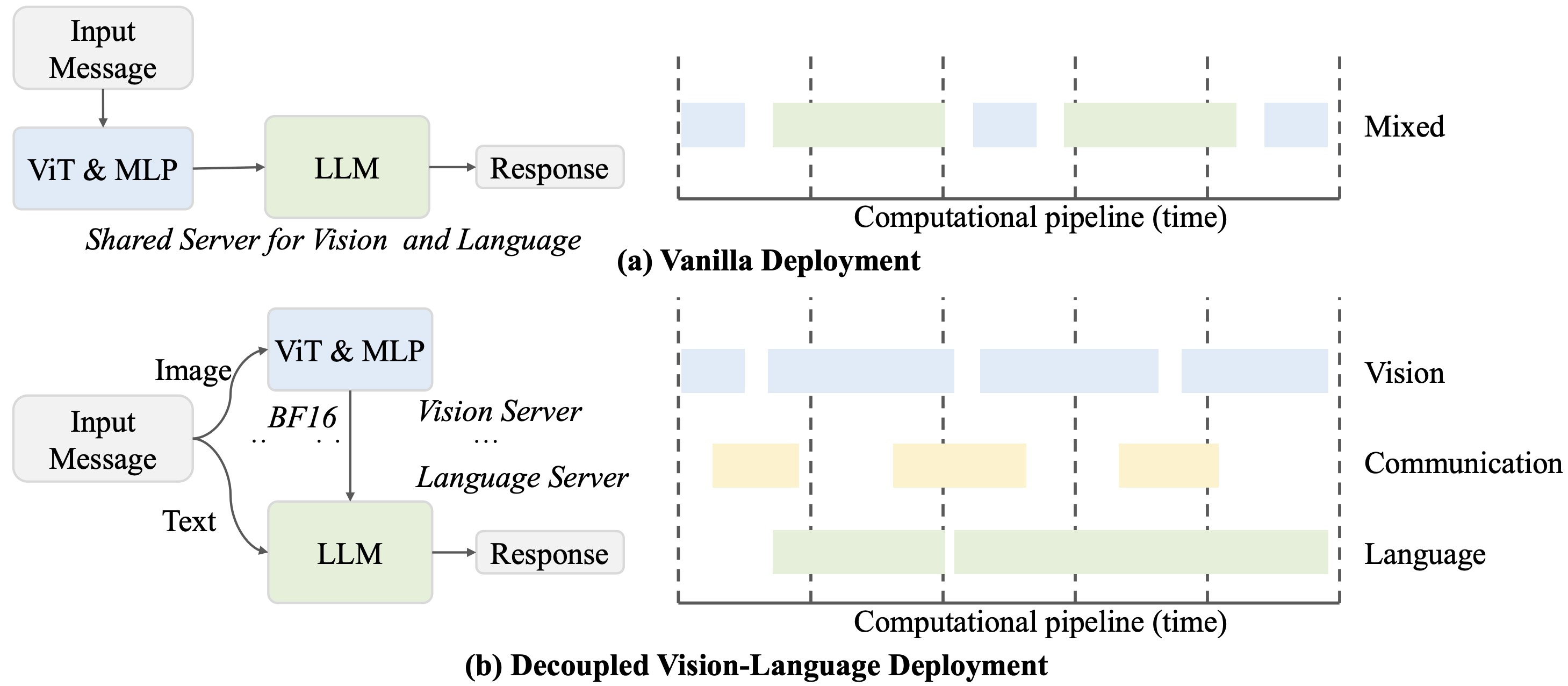

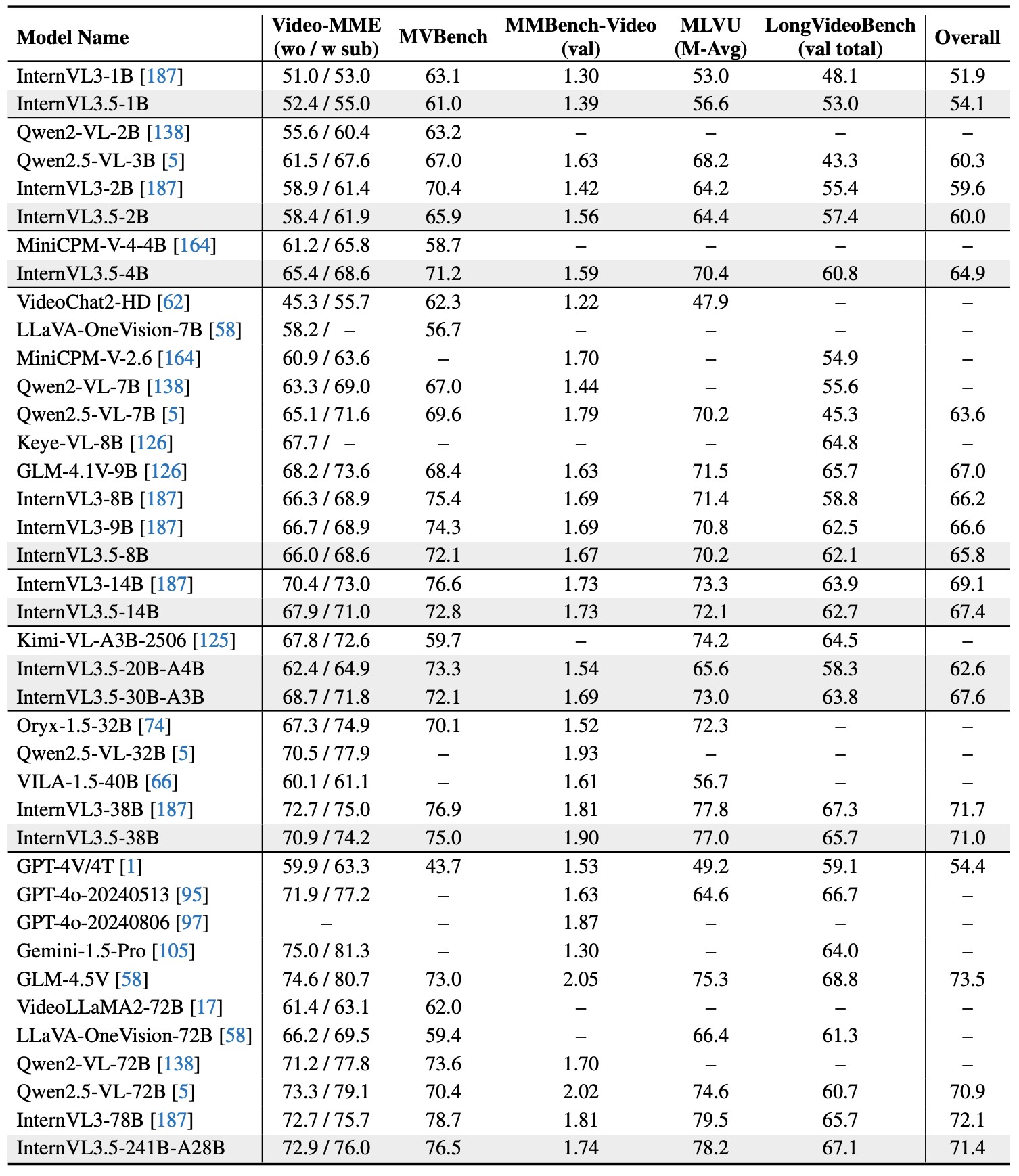

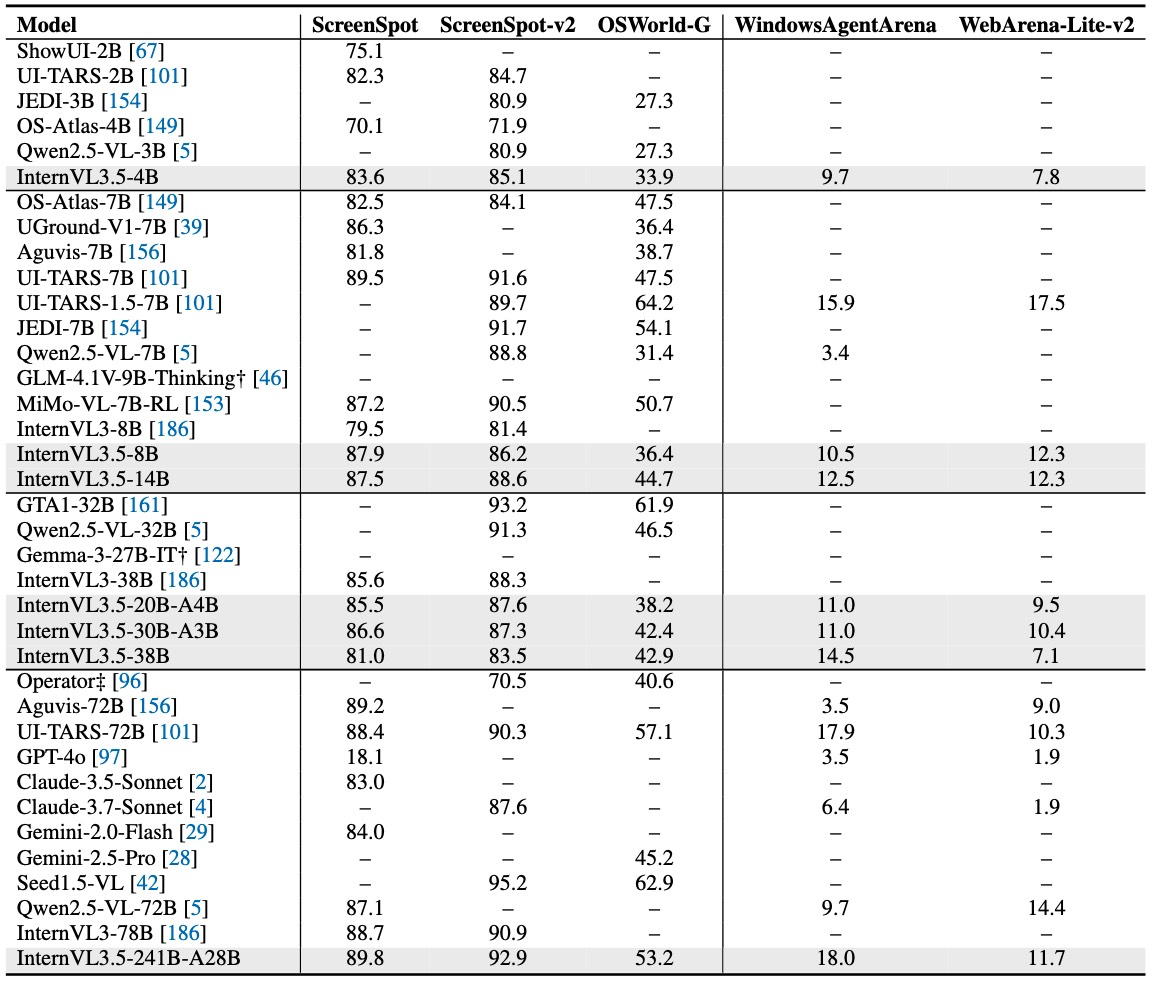

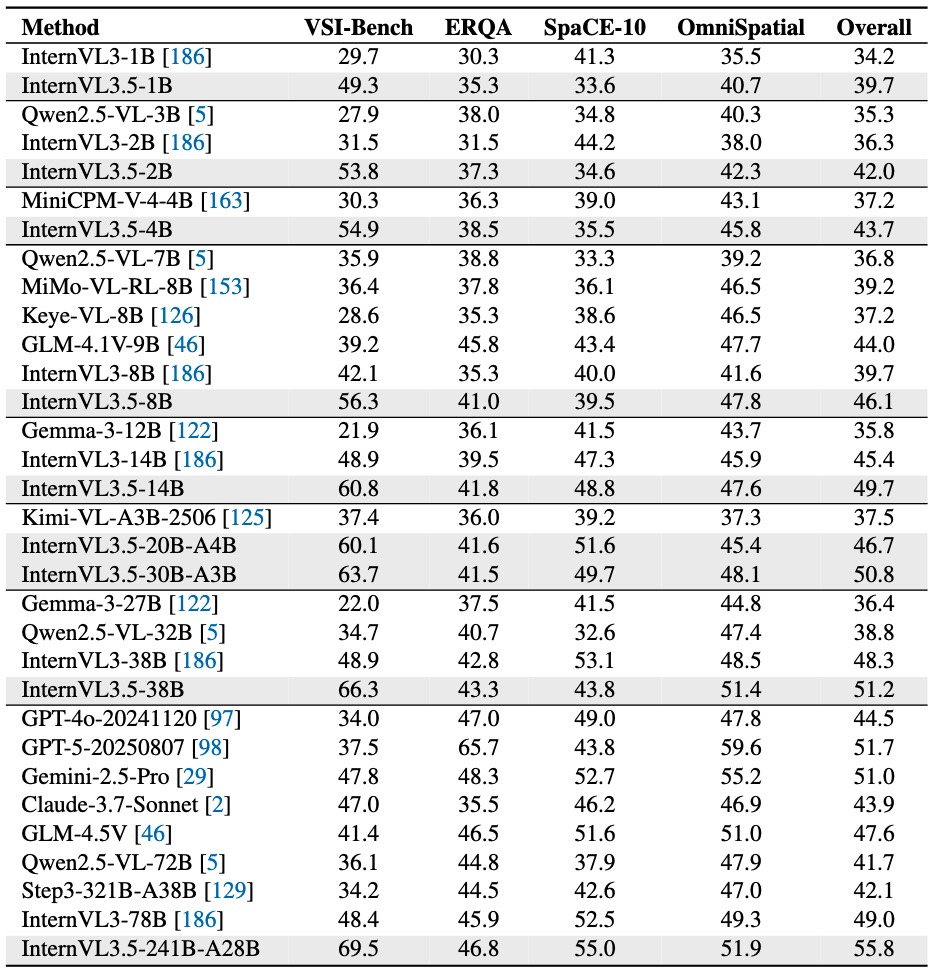

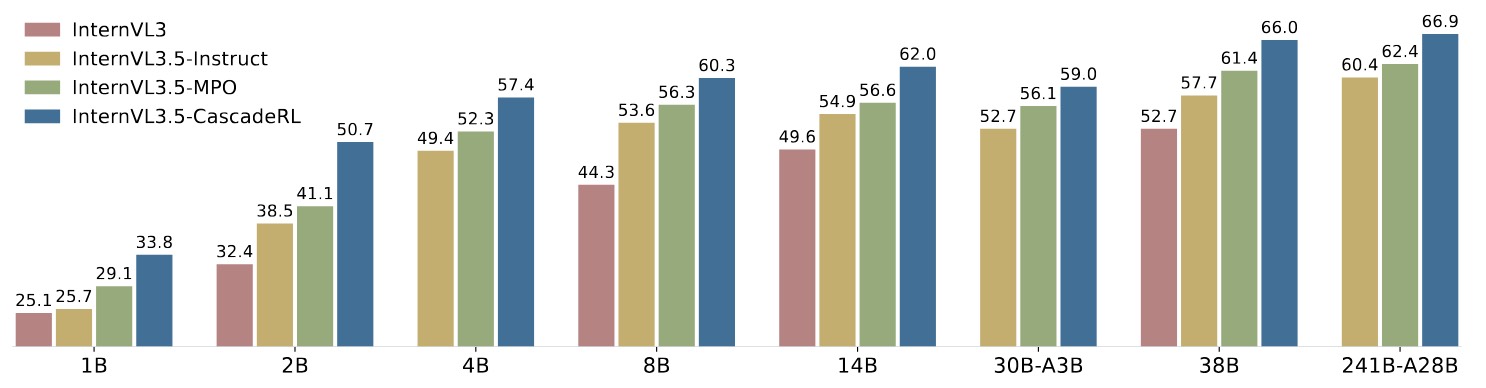

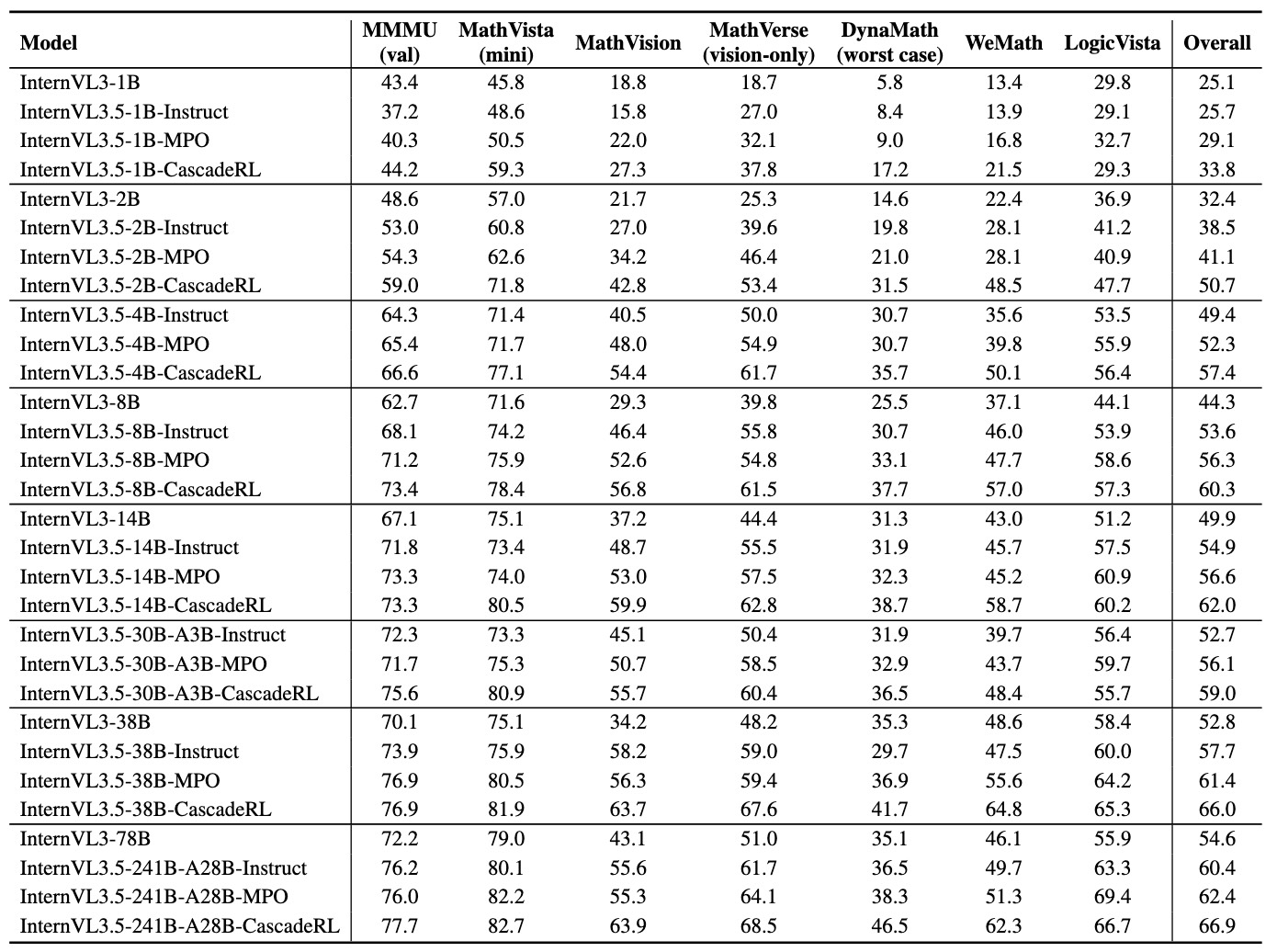

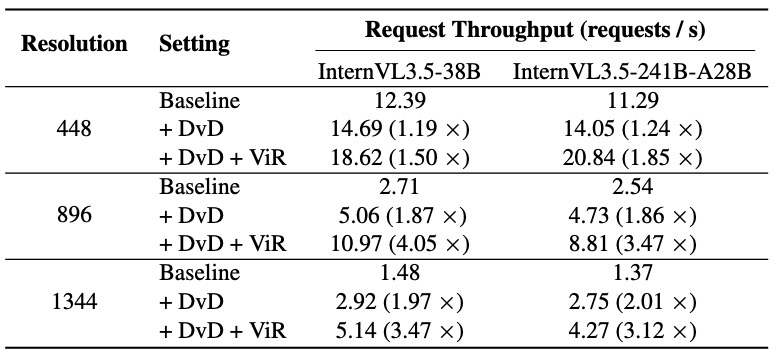

We introduce *InternVL3.5*, a new family of open-source multimodal models that significantly advances versatility, reasoning capability, and inference efficiency along the InternVL series. A key innovation is the *Cascade Reinforcement Learning (Cascade RL)* framework, which enhances reasoning through a two-stage process: offline RL for stable convergence and online RL for refined alignment. This coarse-to-fine training strategy leads to substantial improvements on downstream reasoning tasks, e.g., MMMU and MathVista. To optimize efficiency, we propose a *Visual Resolution Router (ViR)* that dynamically adjusts the resolution of visual tokens without compromising performance. Coupled with ViR, our *Decoupled Vision-Language Deployment (DvD)* strategy separates the vision encoder and language model across different GPUs, effectively balancing computational load. These contributions collectively enable InternVL3.5 to achieve up to a +16.0% gain in overall reasoning performance and a 4.05× inference speedup compared to its predecessor, i.e., InternVL3. In addition, InternVL3.5 supports novel capabilities such as GUI interaction and embodied agency. Notably, our largest model, i.e., InternVL3.5-241B-A28B, attains state-of-the-art results among open-source MLLMs across general multimodal, reasoning, text, and agentic tasks, narrowing the performance gap with leading commercial models like GPT-5. All models and code are publicly released.


> Hatched bars represent closed-source commercial models. We report average scores on a set of multimodal general, reasoning, text, and agentic benchmarks: MMBench v1.1 (en), MMStar, BLINK, HallusionBench, AI2D, OCRBench, MMVet, MME-RealWorld (en), MVBench, VideoMME, MMMU, MathVista, MathVision, MathVerse, DynaMath, WeMath, LogicVista, MATH500, AIME24, AIME25, GPQA, MMLU-Pro, GAOKAO, IFEval, SGP-Bench, VSI-Bench, ERQA, SpaCE-10, and OmniSpatial.


See [quick start](#quick-start) for how to use our model.


## InternVL3.5 Family


In the following table, we provide an overview of the InternVL3.5 series.


To maintain consistency with earlier generations, we provide two model formats: [the GitHub format](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B), consistent with prior releases, and [the HF format](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B-HF), aligned with the official Transformers standard.


> To convert a checkpoint between these two formats, use the [custom2hf](https://github.com/OpenGVLab/InternVL/blob/main/internvl_chat/tools/internvl_custom2hf.py) and [hf2custom](https://github.com/OpenGVLab/InternVL/blob/main/internvl_chat/tools/internvl_hf2custom.py) scripts.

### GitHub Format

| Model | #Vision Param | #Language Param | #Total Param | HF Link | ModelScope Link |
| --------------------- | ------------- | --------------- | ------------ | ------------------------------------------------------------------------------ | ---------------------------------------------------------------------------------------- |
| InternVL3.5-1B | 0.3B | 0.8B | 1.1B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-1B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-1B) |
| InternVL3.5-2B | 0.3B | 2.0B | 2.3B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-2B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-2B) |
| InternVL3.5-4B | 0.3B | 4.4B | 4.7B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-4B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-4B) |
| InternVL3.5-8B | 0.3B | 8.2B | 8.5B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-8B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-8B) |
| InternVL3.5-14B | 0.3B | 14.8B | 15.1B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-14B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-14B) |
| InternVL3.5-38B | 5.5B | 32.8B | 38.4B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-38B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-38B) |
| InternVL3.5-20B-A4B | 0.3B | 20.9B | 21.2B-A4B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview) |
| InternVL3.5-30B-A3B | 0.3B | 30.5B | 30.8B-A3B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-30B-A3B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-30B-A3B) |
| InternVL3.5-241B-A28B | 5.5B | 235.1B | 240.7B-A28B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-241B-A28B) |

### HuggingFace Format

| Model | #Vision Param | #Language Param | #Total Param | HF Link | ModelScope Link |
| ------------------------ | ------------- | --------------- | ------------ | --------------------------------------------------------------------------------- | ------------------------------------------------------------------------------------------- |
| InternVL3.5-1B-HF | 0.3B | 0.8B | 1.1B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-1B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-1B-HF) |
| InternVL3.5-2B-HF | 0.3B | 2.0B | 2.3B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-2B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-2B-HF) |
| InternVL3.5-4B-HF | 0.3B | 4.4B | 4.7B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-4B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-4B-HF) |
| InternVL3.5-8B-HF | 0.3B | 8.2B | 8.5B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-8B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-8B-HF) |
| InternVL3.5-14B-HF | 0.3B | 14.8B | 15.1B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-14B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-14B-HF) |
| InternVL3.5-38B-HF | 5.5B | 32.8B | 38.4B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-38B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-38B-HF) |
| InternVL3.5-20B-A4B-HF | 0.3B | 20.9B | 21.2B-A4B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview-HF) |
| InternVL3.5-30B-A3B-HF | 0.3B | 30.5B | 30.8B-A3B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-30B-A3B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-30B-A3B-HF) |
| InternVL3.5-241B-A28B-HF | 5.5B | 235.1B | 240.7B-A28B | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B-HF) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-241B-A28B-HF) |
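The two formats are loaded differently. The GitHub-format repository ships its own modeling code: this commit adds `configuration_internvl_chat.py` and `modeling_internvl_chat.py`, and the model card metadata carries the `custom_code` tag. Transformers therefore has to be allowed to execute that code. Below is a hedged Python sketch. It assumes the `-HF` repositories load with built-in Transformers classes and so need no remote code. `loader_kwargs` and `load_github_format` are illustrative helper names and are not part of any library.

```python
# Repo IDs copied from the GitHub-format and HF-format tables above.
GITHUB_FORMAT_REPO = "OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview"
HF_FORMAT_REPO = "OpenGVLab/InternVL3_5-GPT-OSS-20B-A4B-Preview-HF"


def loader_kwargs(repo_id: str) -> dict:
    """Keyword arguments for `from_pretrained`, depending on checkpoint format.

    Assumption: a GitHub-format repo ships its own modeling code (e.g.
    modeling_internvl_chat.py), so Transformers must be allowed to execute
    it; an "-HF" repo is assumed to load with built-in Transformers classes.
    """
    kwargs = {"torch_dtype": "auto"}  # let Transformers pick the checkpoint's dtype
    if not repo_id.endswith("-HF"):
        kwargs["trust_remote_code"] = True
    return kwargs


def load_github_format(repo_id: str = GITHUB_FORMAT_REPO):
    """Load model and tokenizer from a GitHub-format repo (downloads weights)."""
    # Imported lazily so the helper above runs without transformers installed.
    from transformers import AutoModel, AutoTokenizer

    model = AutoModel.from_pretrained(repo_id, **loader_kwargs(repo_id)).eval()
    tokenizer = AutoTokenizer.from_pretrained(repo_id, trust_remote_code=True)
    return model, tokenizer
```

Calling `load_github_format()` downloads all nine weight shards, so only do so on a machine with enough memory for a 21.2B-parameter model.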


> We conduct the evaluation with [VLMEvalKit](https://github.com/open-compass/VLMEvalKit). ***To enable the Thinking mode of our model, please set the system prompt to [R1_SYSTEM_PROMPT](https://github.com/open-compass/VLMEvalKit/blob/main/vlmeval/vlm/internvl/internvl_chat.py#L38).*** When enabling Thinking mode, we recommend setting `do_sample=True` and `temperature=0.6` to mitigate undesired repetition.
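The Thinking-mode recommendation translates into a small generation config, sketched below in Python. `R1_SYSTEM_PROMPT` is a placeholder; fill it in from the linked VLMEvalKit file. The non-Thinking defaults (greedy decoding and `max_new_tokens=1024`) are assumptions, not settings stated here. The commented-out `model.system_message` and `model.chat(...)` usage follows earlier InternVL releases; check it against `modeling_internvl_chat.py` before relying on it.

```python
# Placeholder: paste the exact R1_SYSTEM_PROMPT text from the VLMEvalKit file
# linked above. It is deliberately not reproduced here.
R1_SYSTEM_PROMPT = "<R1_SYSTEM_PROMPT from VLMEvalKit>"


def build_generation_config(thinking: bool, max_new_tokens: int = 1024) -> dict:
    """Generation settings for Thinking vs. non-Thinking use.

    Thinking mode follows the recommendation above (do_sample=True,
    temperature=0.6). Greedy decoding for non-Thinking mode and the
    max_new_tokens default are illustrative assumptions.
    """
    config = {"max_new_tokens": max_new_tokens}
    if thinking:
        config.update(do_sample=True, temperature=0.6)
    else:
        config.update(do_sample=False)
    return config


thinking_config = build_generation_config(thinking=True)

# Hypothetical usage with a GitHub-format model loaded via trust_remote_code.
# The `system_message` attribute and `chat(...)` signature follow earlier
# InternVL releases and are assumptions here; check modeling_internvl_chat.py.
# model.system_message = R1_SYSTEM_PROMPT
# response = model.chat(tokenizer, pixel_values, question, thinking_config)
```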


Our training pipeline comprises four stages: Multimodal Continual Pre-Training (**CPT**), Supervised Fine-Tuning (**SFT**), and the two stages of Cascade Reinforcement Learning (**CascadeRL**). In CascadeRL, we first fine-tune the model using Mixed Preference Optimization (**MPO**) under an offline RL setting, followed by **GSPO** under an online RL setting.
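The online stage can be illustrated with a minimal Python sketch of the GSPO objective. It is written from the formulation in the GSPO paper, not from InternVL's training code. Rewards are normalised within a group of responses to the same prompt. Each response gets a length-normalised, sequence-level importance ratio, and that ratio is clipped PPO-style. The choice of the population standard deviation and `eps=0.2` is an assumption. The function returns the surrogate to maximise, so a training loss would be its negative.

```python
import math
from statistics import mean, pstdev


def group_advantages(rewards):
    """Normalise rewards within one prompt's group of sampled responses."""
    mu, sigma = mean(rewards), pstdev(rewards)
    if sigma == 0:  # all responses scored the same: no learning signal
        return [0.0] * len(rewards)
    return [(r - mu) / sigma for r in rewards]


def sequence_ratio(logp_new, logp_old):
    """Length-normalised importance ratio of one response.

    exp(mean_t(log pi_new - log pi_old)), i.e. the geometric mean of the
    per-token probability ratios.
    """
    if len(logp_new) != len(logp_old) or not logp_new:
        raise ValueError("need matching, non-empty per-token log-prob lists")
    diffs = [a - b for a, b in zip(logp_new, logp_old)]
    return math.exp(sum(diffs) / len(diffs))


def gspo_objective(new_logps, old_logps, rewards, eps=0.2):
    """Clipped sequence-level surrogate averaged over the group (maximise it)."""
    advantages = group_advantages(rewards)
    terms = []
    for lp_new, lp_old, adv in zip(new_logps, old_logps, advantages):
        s = sequence_ratio(lp_new, lp_old)
        s_clipped = min(max(s, 1 - eps), 1 + eps)
        terms.append(min(s * adv, s_clipped * adv))
    return sum(terms) / len(terms)
```

Because the whole sequence shares one ratio, a single outlier token cannot be clipped in isolation. This sequence-level clipping is the main design difference from token-level PPO/GRPO.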


For the Flash version of InternVL3.5, we additionally introduce a lightweight training stage, termed Visual Consistency Learning (**ViCO**), which reduces the token cost required to represent an image patch.
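To make "token cost per image patch" concrete: InternVL models encode each image tile with the vision encoder and then merge neighbouring patch features with pixel shuffle. The short Python sketch below assumes 448-pixel tiles (suggested by the `InternViT-300M-448px` base model), a 14-pixel patch size, and a `downsample_ratio` of 0.5 as in earlier InternVL configs. Check `config.json` for the actual values. The 0.25 ratio is purely hypothetical and is not the compression the Flash models are documented to use.

```python
def num_image_tokens(image_size=448, patch_size=14, downsample_ratio=0.5):
    """Visual tokens for one image tile after pixel-shuffle downsampling.

    A 448x448 tile cut into 14x14 patches gives 32x32 = 1024 patch features;
    pixel shuffle with ratio r merges each (1/r)x(1/r) neighbourhood into one
    token, leaving 1024 * r**2 tokens.
    """
    patches_per_side = image_size // patch_size
    return int(patches_per_side ** 2 * downsample_ratio ** 2)


default_tokens = num_image_tokens()                          # 256 tokens per tile
compressed_tokens = num_image_tokens(downsample_ratio=0.25)  # hypothetical: 64
```

The token count enters the language model's sequence length once per tile, so a lower per-tile cost reduces prefill compute roughly proportionally for image-heavy prompts.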


Here, we also open-source the model weights after different training stages for potential research usage.


***If you're unsure which version to use, please select the one without any suffix, as it has completed the full training pipeline.***
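The suffix convention can be read mechanically. Below is a small Python sketch that maps a checkpoint name to the stages in the table's "Training Pipeline" column. Treating a trailing `-HF` as a format marker to be ignored is an assumption.

```python
# Suffix -> stages, as listed in the "Training Pipeline" column of the table below.
STAGES_BY_SUFFIX = {
    "-Pretrained": ("CPT",),
    "-Instruct": ("CPT", "SFT"),
    "-MPO": ("CPT", "SFT", "MPO"),
}
FULL_PIPELINE = ("CPT", "SFT", "CascadeRL")


def training_stages(model_name: str) -> tuple:
    """Return the training stages a checkpoint has completed, based on its name."""
    # Assumption: "-HF" only marks the checkpoint format, so strip it first.
    name = model_name.removesuffix("-HF")
    for suffix, stages in STAGES_BY_SUFFIX.items():
        if name.endswith(suffix):
            return stages
    return FULL_PIPELINE  # no suffix: the recommended, fully trained model
```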


| Model | Training Pipeline | HF Link | ModelScope Link |
| -------------------------------- | --------------------- | --------------------------------------------------------------------------- | ------------------------------------------------------------------------------------- |
| InternVL3.5-1B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-1B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-1B-Pretrained) |
| InternVL3.5-1B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-1B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-1B-Instruct) |
| InternVL3.5-1B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-1B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-1B-MPO) |
| InternVL3.5-1B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-1B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-1B) |
| InternVL3.5-2B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-2B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-2B-Pretrained) |
| InternVL3.5-2B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-2B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-2B-Instruct) |
| InternVL3.5-2B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-2B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-2B-MPO) |
| InternVL3.5-2B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-2B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-2B) |
| InternVL3.5-4B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-4B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-4B-Pretrained) |
| InternVL3.5-4B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-4B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-4B-Instruct) |
| InternVL3.5-4B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-4B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-4B-MPO) |
| InternVL3.5-4B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-4B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-4B) |
| InternVL3.5-8B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-8B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-8B-Pretrained) |
| InternVL3.5-8B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-8B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-8B-Instruct) |
| InternVL3.5-8B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-8B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-8B-MPO) |
| InternVL3.5-8B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-8B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-8B) |
| InternVL3.5-14B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-14B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-14B-Pretrained) |
| InternVL3.5-14B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-14B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-14B-Instruct) |
| InternVL3.5-14B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-14B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-14B-MPO) |
| InternVL3.5-14B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-14B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-14B) |
| InternVL3.5-30B-A3B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-30B-A3B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-30B-A3B-Pretrained) |
| InternVL3.5-30B-A3B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-30B-A3B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-30B-A3B-Instruct) |
| InternVL3.5-30B-A3B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-30B-A3B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-30B-A3B-MPO) |
| InternVL3.5-30B-A3B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-30B-A3B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-30B-A3B) |
| InternVL3.5-38B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-38B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-38B-Pretrained) |
| InternVL3.5-38B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-38B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-38B-Instruct) |
| InternVL3.5-38B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-38B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-38B-MPO) |
| InternVL3.5-38B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-38B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-38B) |
| InternVL3.5-241B-A28B-Pretrained | CPT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B-Pretrained) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-241B-A28B-Pretrained) |
| InternVL3.5-241B-A28B-Instruct | CPT + SFT | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B-Instruct) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-241B-A28B-Instruct) |

|

| 124 |

+

| InternVL3.5-241B-A28B-MPO | CPT + SFT + MPO | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B-MPO) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-241B-A28B-MPO) |

|

| 125 |

+

| InternVL3.5-241B-A28B | CPT + SFT + CascadeRL | [🤗 link](https://huggingface.co/OpenGVLab/InternVL3_5-241B-A28B) | [🤖 link](https://www.modelscope.cn/models/OpenGVLab/InternVL3_5-241B-A28B) |

|

| 126 |

+

|

| 127 |

+

|

| 128 |

+

The Flash version of our model will be released as soon as possible.


## Model Architecture


`InternVL3.5`:
This series of models follows the "ViT–MLP–LLM" paradigm adopted in previous versions of InternVL.
We initialize the language model using the Qwen3 series and GPT-OSS, and the vision encoder using InternViT-300M and InternViT-6B.
The Dynamic High Resolution strategy introduced in InternVL1.5 is also retained in our design.
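For readers unfamiliar with Dynamic High Resolution, the tile-layout step can be sketched as below. This is a minimal sketch modeled on our understanding of the public InternVL preprocessing code, not a copy of it: the 448-pixel tile size, the 1 to 12 tile range, the tie-breaking rule, and the extra thumbnail tile are assumptions here, and the helper names are hypothetical. It only computes the tile grid; resizing and cropping are omitted.

```python
from typing import List, Tuple

Grid = Tuple[int, int]  # (columns, rows) of square tiles


def candidate_grids(min_num: int = 1, max_num: int = 12) -> List[Grid]:
    """All (cols, rows) layouts whose tile count lies in [min_num, max_num],
    ordered by tile count (ties broken deterministically)."""
    grids = {
        (i, j)
        for n in range(min_num, max_num + 1)
        for i in range(1, n + 1)
        for j in range(1, n + 1)
        if min_num <= i * j <= max_num
    }
    return sorted(grids, key=lambda g: (g[0] * g[1], g))


def closest_grid(width: int, height: int, image_size: int = 448,
                 min_num: int = 1, max_num: int = 12) -> Grid:
    """Pick the layout whose aspect ratio best matches the image; on a tie,
    prefer a larger layout only if the image has enough pixels to fill it."""
    aspect = width / height
    area = width * height
    best, best_diff = (1, 1), float("inf")
    for cols, rows in candidate_grids(min_num, max_num):
        diff = abs(aspect - cols / rows)
        if diff < best_diff:
            best, best_diff = (cols, rows), diff
        elif diff == best_diff and area > 0.5 * image_size * image_size * cols * rows:
            best = (cols, rows)
    return best


def num_tiles(width: int, height: int, use_thumbnail: bool = True, **kwargs) -> int:
    """Number of square inputs fed to the vision encoder for one image."""
    cols, rows = closest_grid(width, height, **kwargs)
    n = cols * rows
    # A global thumbnail tile is added only when the image is split into >1 tile.
    return n + 1 if use_thumbnail and n > 1 else n
```

Under these assumptions, an 896 × 448 image maps to a 2 × 1 grid plus a thumbnail (3 tiles), a 448 × 448 image stays a single tile, and a 2000 × 2000 image is split into 3 × 3 tiles plus a thumbnail.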


`InternVL3.5-Flash`:
Compared to InternVL3.5, InternVL3.5-Flash additionally integrates the *Visual Resolution Router (ViR)*, yielding a series of efficient variants suited to resource-constrained scenarios.
Specifically, in InternVL3.5, each image patch is initially represented as 1024 visual tokens by the vision encoder, which are then compressed into 256 tokens by a pixel shuffle module before being passed to the Large Language Model (LLM).
In InternVL3.5-Flash, as shown in the Figure below, an additional pixel shuffle module with a higher compression rate is included, which compresses the visual tokens of a patch down to 64 tokens.
For each patch, the patch router assesses its semantic richness to determine the appropriate compression rate, and routes the patch to the corresponding pixel shuffle module.
Benefiting from this patch-aware compression mechanism, InternVL3.5-Flash reduces the number of visual tokens by 50\% while maintaining nearly 100\% of the performance of InternVL3.5.
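The token arithmetic above can be illustrated with a pure-Python space-to-depth sketch. It assumes each patch yields a 32 × 32 grid of encoder tokens (1024 tokens); merging 2 × 2 neighbourhoods gives 256 tokens and merging 4 × 4 neighbourhoods gives 64. The function names are hypothetical, and the channel ordering is illustrative and may differ from InternVL's own pixel shuffle implementation.

```python
from typing import List

Grid = List[List[List[float]]]  # H x W grid of tokens, each a C-dim feature


def pixel_shuffle_down(grid: Grid, r: int) -> Grid:
    """Space-to-depth: merge every r x r block of neighbouring tokens into a
    single token by concatenating their features, (H, W, C) -> (H/r, W/r, r*r*C)."""
    h, w = len(grid), len(grid[0])
    if h % r or w % r:
        raise ValueError("grid height and width must be divisible by r")
    out: Grid = []
    for i in range(0, h, r):
        row = []
        for j in range(0, w, r):
            merged: List[float] = []
            for di in range(r):
                for dj in range(r):
                    merged.extend(grid[i + di][j + dj])
            row.append(merged)
        out.append(row)
    return out


def num_tokens(grid: Grid) -> int:
    return len(grid) * len(grid[0])


def visual_token_budget(num_patches: int, num_compressed: int,
                        default_tokens: int = 256, compressed_tokens: int = 64) -> int:
    """Total visual tokens when `num_compressed` of the patches are routed to
    the higher-compression (64-token) pixel shuffle module."""
    if not 0 <= num_compressed <= num_patches:
        raise ValueError("num_compressed must be between 0 and num_patches")
    return (num_patches - num_compressed) * default_tokens + num_compressed * compressed_tokens


# A 32 x 32 grid of 1-dim tokens stands in for the 1024 encoder tokens of one patch.
encoder_tokens = [[[float(32 * i + j)] for j in range(32)] for i in range(32)]
print(num_tokens(pixel_shuffle_down(encoder_tokens, 2)))  # 256 (each token now has 4 features)
print(num_tokens(pixel_shuffle_down(encoder_tokens, 4)))  # 64 (each token now has 16 features)
```

Under this per-patch accounting, a 50% token reduction would correspond to routing about two thirds of the patches to the 64-token path; for example, `visual_token_budget(6, 4)` gives 768 tokens versus 1536 when no patch is compressed. The actual routing ratio depends on the inputs and is not specified here.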


## Training and Deployment Strategy


### Pre-Training


During the pre-training stage, we update all model parameters jointly using a combination of large-scale text and multimodal corpora. Specifically, given an arbitrary training sample consisting of a multimodal token sequence \\(\mathbf{x}=\left(x_1, x_2, \ldots, x_L\right)\\), the next token prediction (NTP) loss is calculated on each text token as follows:

$$
\mathcal{L}_{i}=-\log p_\theta\left(x_i \mid x_1, \ldots, x_{i-1}\right),
$$

where \\(x_i\\) is the predicted token and the prefix tokens \\(\{x_1, x_2, \ldots, x_{i-1}\}\\) can be either text tokens or image tokens. Notably, for conversation samples, only response tokens contribute to the loss.
Additionally, to mitigate bias toward either longer or shorter responses during training, we adopt square-root averaging to re-weight the NTP loss as follows:

$$
\mathcal{L}_{i}^{'} = \frac{w_i}{\sum_j w_j} \cdot \mathcal{L}_i, \quad w_i = \frac{1}{N^{0.5}},
$$

where \\(N\\) denotes the number of tokens in the training sample on which the loss is calculated. This weighting lies between token averaging (\\(w_i = 1\\)) and sample averaging (\\(w_i = 1/N\\)). Random JPEG compression is also applied to enhance the model's real-world performance.
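A minimal pure-Python sketch of the two formulas above, assuming the model's probability for each ground-truth token is already available and that the normalization \\(\sum_j w_j\\) runs over all loss tokens in the batch (an assumption). The function names are hypothetical.

```python
import math
from typing import List, Sequence


def masked_token_nll(target_probs: Sequence[float], loss_mask: Sequence[bool]) -> List[float]:
    """NTP loss -log p_theta(x_i | x_<i) for tokens that count toward the loss
    (e.g. response tokens of a conversation); all other tokens are dropped."""
    return [-math.log(p) for p, keep in zip(target_probs, loss_mask) if keep]


def square_root_averaged_loss(per_sample_losses: Sequence[Sequence[float]]) -> float:
    """Combine token losses with w_i = 1 / sqrt(N), where N is the number of loss
    tokens in the sample that token i belongs to, normalized by sum_j w_j."""
    weighted_sum = 0.0
    weight_total = 0.0
    for losses in per_sample_losses:
        n = len(losses)
        if n == 0:
            continue  # a sample without loss tokens contributes nothing
        w = 1.0 / math.sqrt(n)
        weighted_sum += w * sum(losses)
        weight_total += w * n
    return weighted_sum / weight_total if weight_total > 0 else 0.0
```

For one sample with a single loss token of loss 1.0 and another with four tokens of loss 2.0, token averaging gives 1.8, sample averaging gives 1.5, and square-root averaging gives 5/3 ≈ 1.67, in between the two.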


### Supervised Fine-Tuning


During the SFT phase, we adopt the same objective as in the pre-training stage and use the square-root averaging strategy to calculate the final loss. In this stage, the context window is set to 32K tokens to accommodate long-context inputs.
Compared to InternVL3, the SFT stage of InternVL3.5 uses more high-quality and diverse training data, derived from three sources:


(1) Instruction-following data from InternVL3, which are reused to preserve broad coverage of vision–language tasks.


(2) Multimodal reasoning data in the "Thinking" mode, which are included to instill long-thinking capabilities in the model. To construct such data, we first use InternVL3-78B to describe the image and then input the description into DeepSeek-R1 to sample rollouts with detailed reasoning processes. Rollouts with an incorrect final answer are filtered out. The questions in these datasets cover various expert domains, such as mathematics and scientific disciplines, thereby strengthening performance on different reasoning tasks.


(3) Capability-expansion datasets, which endow InternVL3.5 with new skills, including GUI-based interaction, embodied interaction, and scalable vect
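The rejection-filtering step described in item (2) can be sketched as follows. `describe`, `sample_rollouts`, and `extract_answer` are hypothetical callables standing in for the captioner (InternVL3-78B), the reasoning model (DeepSeek-R1), and an answer parser; the exact-match comparison, the number of rollouts, and the output record format are assumptions, not the authors' pipeline.

```python
from typing import Callable, Dict, List


def build_thinking_samples(
    image: object,
    question: str,
    reference_answer: str,
    describe: Callable[[object], str],
    sample_rollouts: Callable[[str, str, int], List[str]],
    extract_answer: Callable[[str], str],
    num_rollouts: int = 4,
) -> List[Dict[str, object]]:
    # 1) Turn the image into text with a captioning model.
    description = describe(image)
    # 2) Sample reasoning rollouts from a text-only reasoning model.
    rollouts = sample_rollouts(description, question, num_rollouts)
    # 3) Keep only rollouts whose final answer matches the reference answer;
    #    the kept rollouts are paired with the original image for multimodal SFT.
    return [
        {"image": image, "question": question, "response": r}
        for r in rollouts
        if extract_answer(r).strip() == reference_answer.strip()
    ]
```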


### Cascade Reinforcement Learning


Cascade RL aims to combine the benefits of offline RL and online RL to progressively and efficiently facilitate the post-training of MLLMs.
Specifically, we first fine-tune the model using an offline RL algorithm as an efficient warm-up stage to reach satisfactory results, which helps guarantee high-quality rollouts for the subsequent stage.
Subsequently, we employ an online RL algorithm to further refine the output distribution based on rollouts generated by the model itself. Compared with a single offline or online RL stage, our cascaded RL achieves significant performance improvements at a fraction of the GPU time cost.


During the offline RL stage, we employ mixed preference optimization (MPO) to fine-tune the model. Specifically, the training objective of MPO is a combination of preference loss \\(\mathcal{L}_{p}\\), quality loss \\(\mathcal{L}_{q}\\), and generation loss \\(\mathcal{L}_{g}\\), which can be formulated as follows:


$$
\mathcal{L}_{\text{MPO}} = w_{p} \mathcal{L}_{p} + w_{q} \mathcal{L}_{q} + w_{g} \mathcal{L}_{g},
$$


where \\(w_{*}\\) represents the weight assigned to each loss component.
The DPO loss, BCO loss, and LM loss serve as the preference loss, quality loss, and generation loss, respectively.
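A minimal sketch of the MPO objective for a single preference pair, assuming the summed sequence log-probabilities of the chosen and rejected responses under the policy and a frozen reference model are already computed. The DPO and BCO terms follow their standard formulations; `beta`, the reward shift `delta` (a running mean of past rewards in BCO), and the weights are placeholders, not the settings used for InternVL3.5.

```python
import math


def log_sigmoid(z: float) -> float:
    """Numerically stable log(sigmoid(z))."""
    if z >= 0:
        return -math.log1p(math.exp(-z))
    return z - math.log1p(math.exp(z))


def mpo_loss(policy_chosen_logp: float, policy_rejected_logp: float,
             ref_chosen_logp: float, ref_rejected_logp: float,
             chosen_lm_loss: float, w_p: float, w_q: float, w_g: float,
             beta: float = 0.1, delta: float = 0.0) -> float:
    # Implicit rewards: beta * log(pi_theta / pi_ref) for each full response.
    r_chosen = beta * (policy_chosen_logp - ref_chosen_logp)
    r_rejected = beta * (policy_rejected_logp - ref_rejected_logp)
    # Preference loss (DPO): rank the chosen response above the rejected one.
    loss_p = -log_sigmoid(r_chosen - r_rejected)
    # Quality loss (BCO): score chosen as good and rejected as bad, relative to delta.
    loss_q = -log_sigmoid(r_chosen - delta) - log_sigmoid(-(r_rejected - delta))
    # Generation loss (LM): NTP loss on the chosen response, computed elsewhere.
    loss_g = chosen_lm_loss
    return w_p * loss_p + w_q * loss_q + w_g * loss_g
```

When the policy equals the reference model and `delta` is 0, both implicit rewards are 0, so the DPO term is \\(\log 2\\) and the BCO term is \\(2\log 2\\); raising the policy's likelihood of the chosen response lowers both.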


During the online RL stage, we employ GSPO without reference-model constraints as our online RL algorithm, which we find more effective for training both dense and mixture-of-experts (MoE) models. Similar to GRPO, the advantage is defined as the normalized reward across responses sampled from the same query.
The training objective of GSPO is given by:


$$
\mathcal{L}_{\mathrm{GSPO}}(\theta)=\mathbb{E}_{x \sim \mathcal{D},\left\{y_i\right\}_{i=1}^G \sim \pi_{\theta \text { old }}(\cdot \mid x)}\left[\frac{1}{G} \sum_{i=1}^G \min \left(s_i(\theta) \widehat{A}_i, \operatorname{clip}\left(s_i(\theta), 1-\varepsilon, 1+\varepsilon\right) \widehat{A}_i\right)\right],
$$


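where \\(G\\) is the number of responses sampled for query \\(x\\), \\(\widehat{A}_i\\) is the normalized advantage of response \\(y_i\\), and the objective is maximized. The importance sampling ratio \\(s_i(\theta)\\) is defined as the geometric mean of the per-token ratios, i.e., \\(s_i(\theta)=\left(\frac{\pi_\theta(y_i \mid x)}{\pi_{\theta \text { old }}(y_i \mid x)}\right)^{\frac{1}{|y_i|}}\\).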
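The sequence-level ratio and clipped objective can be sketched for one group of responses as follows. Advantages are normalized with the group mean and population standard deviation (the exact normalization and `eps` are assumptions), per-token log-probabilities are assumed to be precomputed, and `clip_eps` (\\(\varepsilon\\)) must be chosen by the user; the function names are hypothetical.

```python
import math
from statistics import mean, pstdev
from typing import List, Sequence


def group_advantages(rewards: Sequence[float], eps: float = 1e-6) -> List[float]:
    """Normalize rewards within the group of responses sampled for one query."""
    mu, sigma = mean(rewards), pstdev(rewards)
    return [(r - mu) / (sigma + eps) for r in rewards]


def sequence_ratio(logp_new: Sequence[float], logp_old: Sequence[float]) -> float:
    """s_i(theta): geometric mean of per-token ratios pi_theta / pi_old,
    computed as exp(mean per-token log-ratio) for numerical stability."""
    log_ratios = [n - o for n, o in zip(logp_new, logp_old)]
    return math.exp(sum(log_ratios) / len(log_ratios))


def gspo_objective(new_logps: Sequence[Sequence[float]],
                   old_logps: Sequence[Sequence[float]],
                   rewards: Sequence[float],
                   clip_eps: float) -> float:
    """Clipped sequence-level surrogate for one group (to be maximized)."""
    advantages = group_advantages(rewards)
    terms = []
    for lp_new, lp_old, adv in zip(new_logps, old_logps, advantages):
        s = sequence_ratio(lp_new, lp_old)
        s_clipped = min(max(s, 1.0 - clip_eps), 1.0 + clip_eps)
        terms.append(min(s * adv, s_clipped * adv))
    return sum(terms) / len(terms)
```

Because \\(s_i(\theta)\\) is the exponential of the mean per-token log-ratio, it equals the geometric mean of the per-token ratios and does not grow or shrink with response length, so a single clipping range applies to responses of any length.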


> Please see [our paper](https://huggingface.co/papers/2508.18265) for more technical and experimental details.


### Visual Consistency Learning


We further include ViCO as an additional training stage to integrate the *visual resolution router (ViR)* into InternVL3.5, thereby reducing its inference cost. The resulting efficient versions of InternVL3.5 are termed *InternVL3.5-Flash*. ViCO comprises two stages:


`Consistency training`:
In this stage, the entire model is trained to minimize the divergence between response distributions conditioned on visual tokens with different compression rates.
In practice, we introduce an extra reference model, which is frozen and initialized with InternVL3.5.
Given a sample, each image patch is represented as either 256 or 64 tokens, and the training objective is defined as follows:


$$
\mathcal{L}_\text{ViCO} =
\mathbb{E}_{\xi \sim \mathcal{R}} \Bigg[
\frac{1}{N} \sum_{i=1}^{N} \mathrm{KL} \Big(
\pi_{\theta_{ref}}\left(y_i \mid y_{<i}, I\right) \;\Big\|\;
\pi_{\theta_{policy}}\left(y_i \mid y_{<i}, I_\xi\right)
\Big)
\Bigg],
$$


where \\(\mathrm{KL}\\) denotes the KL divergence and \\(\xi\\) denotes the compression rate, which is uniformly sampled from \\(\{\frac{1}{4},\frac{1}{16}\}\\). The image \\(I_\xi\\) is represented as 256 tokens when \\(\xi=\frac{1}{4}\\) and 64 tokens when \\(\xi=\frac{1}{16}\\). Notably, the reference model always performs inference with \\(\xi=\frac{1}{4}\\).
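A minimal sketch of one ViCO loss evaluation: draw \\(\xi\\) uniformly from \\(\{\frac{1}{4},\frac{1}{16}\}\\), then average the token-level KL divergence between the reference distribution (always computed at \\(\xi=\frac{1}{4}\\)) and the policy distribution (computed at the drawn \\(\xi\\)). The next-token distributions are assumed to be given as probability lists; the helper names, and the 1024-token base used to map \\(\xi\\) to tokens per patch, are assumptions for illustration.

```python
import math
import random
from typing import Sequence

COMPRESSION_RATES = (1 / 4, 1 / 16)  # xi is drawn uniformly from this set


def tokens_per_patch(xi: float, encoder_tokens: int = 1024) -> int:
    """1/4 -> 256 tokens, 1/16 -> 64 tokens for a 1024-token patch."""
    return round(encoder_tokens * xi)


def sample_compression_rate(rng: random.Random) -> float:
    return rng.choice(COMPRESSION_RATES)


def kl_divergence(p: Sequence[float], q: Sequence[float], eps: float = 1e-12) -> float:
    """KL(p || q) for two discrete distributions over the vocabulary."""
    return sum(pi * math.log(pi / max(qi, eps)) for pi, qi in zip(p, q) if pi > 0)


def vico_loss(ref_dists: Sequence[Sequence[float]],
              policy_dists: Sequence[Sequence[float]]) -> float:
    """Mean token-level KL(pi_ref(. | I) || pi_policy(. | I_xi)) over N response tokens.
    ref_dists come from the frozen reference model at xi = 1/4;
    policy_dists come from the trained model at the sampled xi."""
    if len(ref_dists) != len(policy_dists) or not ref_dists:
        raise ValueError("need one reference and one policy distribution per token")
    return sum(kl_divergence(p, q) for p, q in zip(ref_dists, policy_dists)) / len(ref_dists)
```

One natural Monte Carlo estimate of the expectation over \\(\xi\\) is to draw \\(\xi\\) with `sample_compression_rate` for each sample, run the policy at that rate, and evaluate `vico_loss` against the reference outputs.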


`Router training`:
This stage aims to train the ViR to select an appropriate trade-off resolution for different inputs.
ViR is formulated as a binary classifier and trained using the standard cross-entropy loss.
To construct the routing targets, we first compute the KL divergence between the model outputs conditioned on less-compressed visual tokens (i.e., 256 tokens per patch) and those conditioned on more aggressively compressed visual tokens (i.e., 64 tokens per patch).
During this stage, the main MLLM (ViT, MLP, and LLM) is kept frozen, and only the ViR is trained.
Specifically, we first compute the loss ratio for each patch:


$$
r_i = \frac{\mathcal{L}_\text{ViCO}\big(y_i \mid I_{\frac{1}{16}}\big)}{\mathcal{L}_\text{ViCO}\big(y_i \mid I_{\frac{1}{4}}\big)},
$$


which quantifies the relative increase in loss caused by compressing the visual tokens. Based on this ratio, the binary ground-truth label for the patch router is defined as:


$$
y_i^\text{router} =
\begin{cases}
0, & r_i < \tau \; \text{(compression has negligible impact)} \\
1, & r_i \ge \tau \; \text{(compression has significant impact)},
\end{cases}
$$


where \\(\tau\\) is a threshold, and \\(y_i^{\text{router}}=0\\) and \\(y_i^{\text{router}}=1\\) indicate that the compression rate \\(\xi\\) is set to \\(\tfrac{1}{16}\\) and \\(\tfrac{1}{4}\\), respectively.
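The label construction and router loss can be sketched per patch as below. The threshold \\(\tau\\) is not specified in this section, so `tau` is a free parameter here; treating the router output as the probability of label 1 (keep \\(\xi=\frac{1}{4}\\)) and the helper names are assumptions.

```python
import math

RATE_DEFAULT = 1 / 4      # label 1: keep 256 tokens per patch
RATE_COMPRESSED = 1 / 16  # label 0: compress to 64 tokens per patch


def loss_ratio(loss_compressed: float, loss_default: float) -> float:
    """r_i: loss conditioned on I_{1/16} divided by loss conditioned on I_{1/4}."""
    return loss_compressed / loss_default


def router_label(ratio: float, tau: float) -> int:
    """0 if compression has negligible impact (r_i < tau), else 1."""
    return 0 if ratio < tau else 1


def label_to_rate(label: int) -> float:
    return RATE_DEFAULT if label == 1 else RATE_COMPRESSED


def router_bce(prob_label1: float, label: int, eps: float = 1e-12) -> float:
    """Binary cross-entropy between the ViR output and the target label."""
    p = min(max(prob_label1, eps), 1.0 - eps)
    return -(label * math.log(p) + (1 - label) * math.log(1.0 - p))
```

For instance, with `tau=1.1`, a patch whose loss rises by only 2% under 64 tokens gets label 0 and is compressed, while a patch whose loss rises by 50% gets label 1 and keeps 256 tokens.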


> Please see [our paper](https://huggingface.co/papers/2508.18265) for more technical and experimental details.


### Test-Time Scaling
