Qwen3-1.7B-FlashHead

Optimized version of Qwen3-1.7B using FlashHead, Embedl’s efficient replacement for the language model head, reducing size while preserving accuracy. Designed for low-latency inference on NVIDIA RTX GPUs, leveraging:

- FlashHead

- vLLM plugin via

flash-head

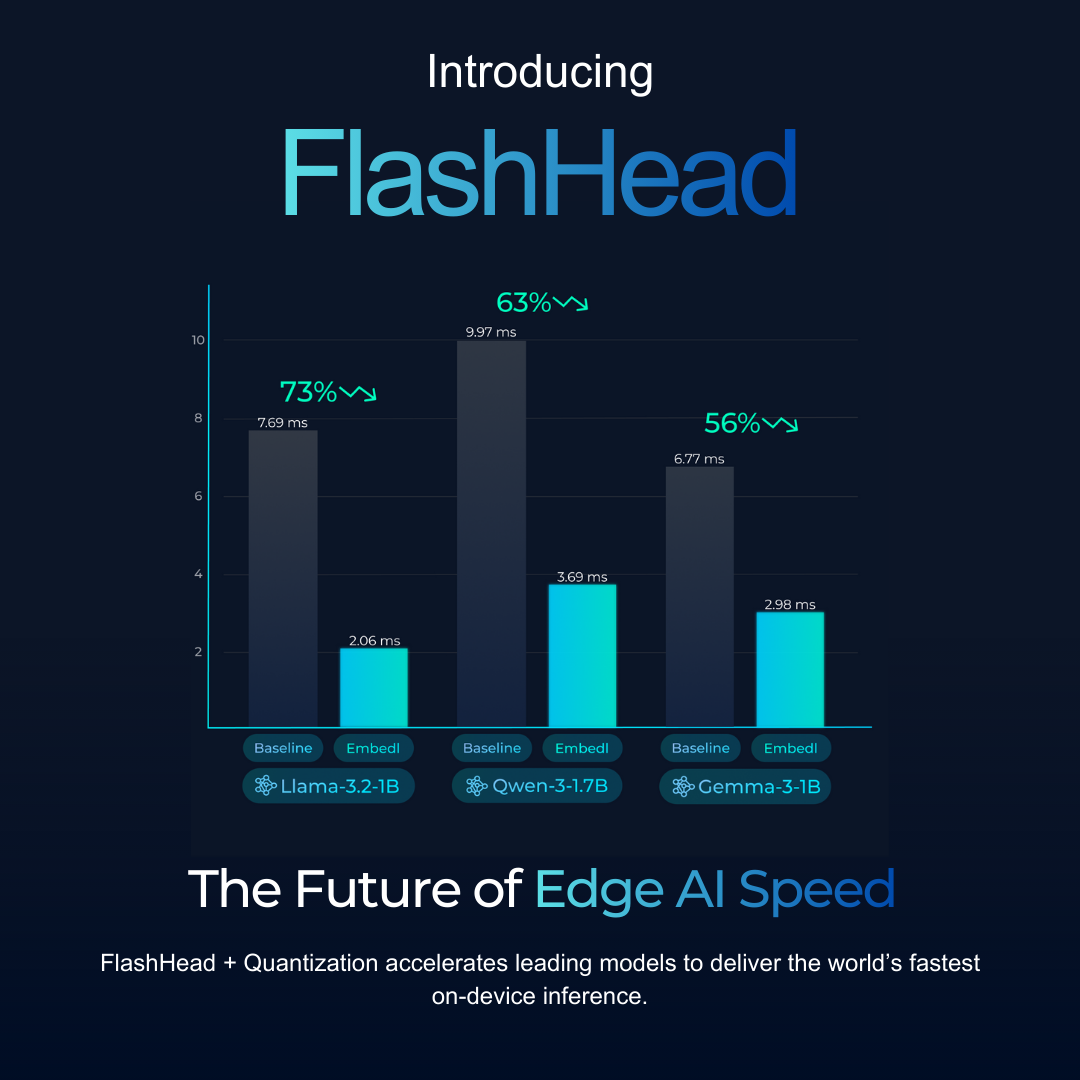

FlashHead matches the Qwen3-1.7B baseline within rounding error on common benchmarks (MMLU-Pro, HellaSwag, GSM8K, etc.) and, combined with quantization, delivers SOTA on-device latency.

Quickstart

pip install flash-head

vllm serve embedl/Qwen3-1.7B-FlashHead

Model Details

| Field | Value |

|---|---|

| Base Model | Qwen3-1.7B |

| Input / Output | Text → Text |

| Release Date | 2025-12-08 |

| Version | 1.0 |

| Optimizations | FlashHead LM Head |

| Developers | Embedl |

| Licenses | Upstream: Apache 2.0. Optimized components: Embedl Models Community Licence v1.0 (no redistribution) |

| Intended Use | Text generation, reasoning, assistant-style interaction, and general-purpose NLP on NVIDIA RTX GPUs |

Optimizations

- FlashHead LM Head - lightweight replacement for the dense LM head, significantly improving throughput.

- vLLM Plugin Integration - compatible with vLLM (0.14.0+) via the

flash-headplugin.

Performance

Token Generation Speed (RTX 3500 Ada, batch size = 1)

| Precision | Tokens/sec | Speedup vs BF16 |

|---|---|---|

| BF16 baseline | 100 | 1.0× |

| FlashHead (Embedl) | 114 | 1.14× |

| W4A16 baseline | 206 | 2.06x× |

| FlashHead W4A16 (Embedl) | 271 | 2.27× |

FlashHead improves end-to-end speed by 1.32× over state-of-the-art, while maintaining full accuracy parity.

Measurement setup: vLLM 0.10.2, batch_size=1, prompt length=32, max_new_tokens=128, 10 warm-up runs, averaged over 100 runs.

Accuracy (Parity with Baseline)

| Method | MMLU-Pro | IFEval | BBH | TruthfulQA | GSM8K |

|---|---|---|---|---|---|

| Baseline | 0.38 | 0.24 | 0.45 | 0.47 | 0.13 |

| FlashHead | 0.38 | 0.25 | 0.45 | 0.47 | 0.12 |

FlashHead closely matches baseline accuracy.

Installation

pip install flash-head

The flash-head vLLM plugin is required. It activates automatically at startup.

Usage Examples

Note (vLLM context length): max_model_len=131072 may fail on GPUs without enough free VRAM for the KV cache. If you see a KV cache memory error, lower max_model_len (or increase gpu_memory_utilization).

vLLM Inference

from vllm import LLM, SamplingParams

from transformers import AutoTokenizer

model_id = "embedl/Qwen3-1.7B-FlashHead"

if __name__ == "__main__":

tokenizer = AutoTokenizer.from_pretrained(model_id, trust_remote_code=True)

messages = [{"role": "user", "content": "Write a haiku about coffee."}]

text = tokenizer.apply_chat_template(

messages,

tokenize=False,

add_generation_prompt=True,

enable_thinking=True,

)

sampling = SamplingParams(

max_tokens=1024,

temperature=0.6,

top_p=0.95,

top_k=20,

)

llm = LLM(model=model_id, trust_remote_code=True, max_model_len=131072)

output = llm.generate([text], sampling)

print(output[0].outputs[0].text)

Limitations

- Requires vLLM >= 0.14.0

- Currently optimized for NVIDIA RTX GPUs

Roadmap

Planned improvements:

- Advanced mixed precision quantization

- Huggingface transformers generation

- vLLM CLI benchmarking for detailed latency evaluation

lm-eval-harnessintegration for detailed accuracy evaluation- Upstream support in Transformers and vLLM

- Compatibility with GGUF, MLC, Llama.cpp, Ollama, etc.

- Broader model coverage (larger models, VLMs, VLAs)

License

- Upstream: Apache Licence 2.0.

- Optimized Components: Embedl Models Community Licence v1.0 (no redistribution)

Contact

Enterprise & Commercial Inquiries models@embedl.com

Technical Issues & Early Access https://github.com/embedl/flash-head

More Information & Model Releases https://embedl.com

Partner & Developer Opportunities

If you are evaluating on-device inference, building products on SLMs, or exploring custom model optimization, reach out for:

- Embedl SDK - AI optimization tools & profiling

- Embedl HUB - benchmarking platform

- Engineering support for on-prem/edge deployments

- Migration guidance (Llama / Qwen / Gemma)

- Early access & partner co-marketing opportunities

Contact: models@embedl.com

- Downloads last month

- 47