Turkish News Summarizer (IT5-Large)

This model was fine-tuned on a corpus of more than 270,000 real, human-written (non-synthetic) Turkish news articles paired with their summaries. It is optimized to follow the morphological structure and formal register of Turkish news writing.

Dataset Specifications

Volume: 273,046 article-summary pairs.

Type: Authentic content derived from real-time news feeds, written by professional editors.

Domains: Politics, Economy, Sports, Technology, and General Agenda.

Training Parameters and Infrastructure

Training used the following hardware and configuration:

Hardware: NVIDIA B200 (Blackwell), 180 GB VRAM.

Base Model: gsarti/it5-large (740M parameters).

Precision: BF16 mixed precision with TF32 Tensor Core acceleration.

Effective Batch Size: 160 (Per device batch: 80, Gradient Accumulation: 2).

Optimizer: AdamW (Fused Kernel).

Learning Rate: 4e-5 (with Cosine Decay Scheduler).

Memory Optimization: Gradient checkpointing enabled.
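The settings above can be summarized in code. The sketch below is a hypothetical mapping of the listed values onto Hugging Face `Seq2SeqTrainingArguments` field names (it is not the author's training script). It also shows the arithmetic behind the effective batch size and a cosine-decay learning-rate function with the same shape as the cosine schedule in `transformers` (`get_cosine_schedule_with_warmup`). The `warmup_steps` parameter is an assumption: the card does not say whether warmup was used.

```python
import math

# Hypothetical mapping of the card's hyperparameters onto
# Seq2SeqTrainingArguments field names (not the author's actual script).
TRAINING_KWARGS = {
    "per_device_train_batch_size": 80,
    "gradient_accumulation_steps": 2,
    "learning_rate": 4e-5,
    "lr_scheduler_type": "cosine",
    "optim": "adamw_torch_fused",   # fused AdamW kernel
    "bf16": True,                   # BF16 mixed precision
    "tf32": True,                   # TF32 matmuls (Ampere or newer GPUs)
    "gradient_checkpointing": True,  # trade recomputation for memory
}


def effective_batch_size(kwargs, num_devices=1):
    """Number of examples contributing to each optimizer step."""
    return (kwargs["per_device_train_batch_size"]
            * kwargs["gradient_accumulation_steps"]
            * num_devices)


def cosine_lr(step, total_steps, peak_lr=4e-5, warmup_steps=0):
    """Optional linear warmup (assumed), then cosine decay from peak_lr to zero."""
    if step < warmup_steps:
        return peak_lr * step / max(1, warmup_steps)
    progress = (step - warmup_steps) / max(1, total_steps - warmup_steps)
    # Clamp so the rate stays at zero past the final step
    return peak_lr * 0.5 * (1.0 + math.cos(math.pi * min(progress, 1.0)))


print(effective_batch_size(TRAINING_KWARGS))  # 160 on a single device
```

On a GPU that supports BF16 and TF32, these values could be passed as `Seq2SeqTrainingArguments(output_dir="out", **TRAINING_KWARGS)`; the `tf32` flag raises an error on hardware without TF32 support.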

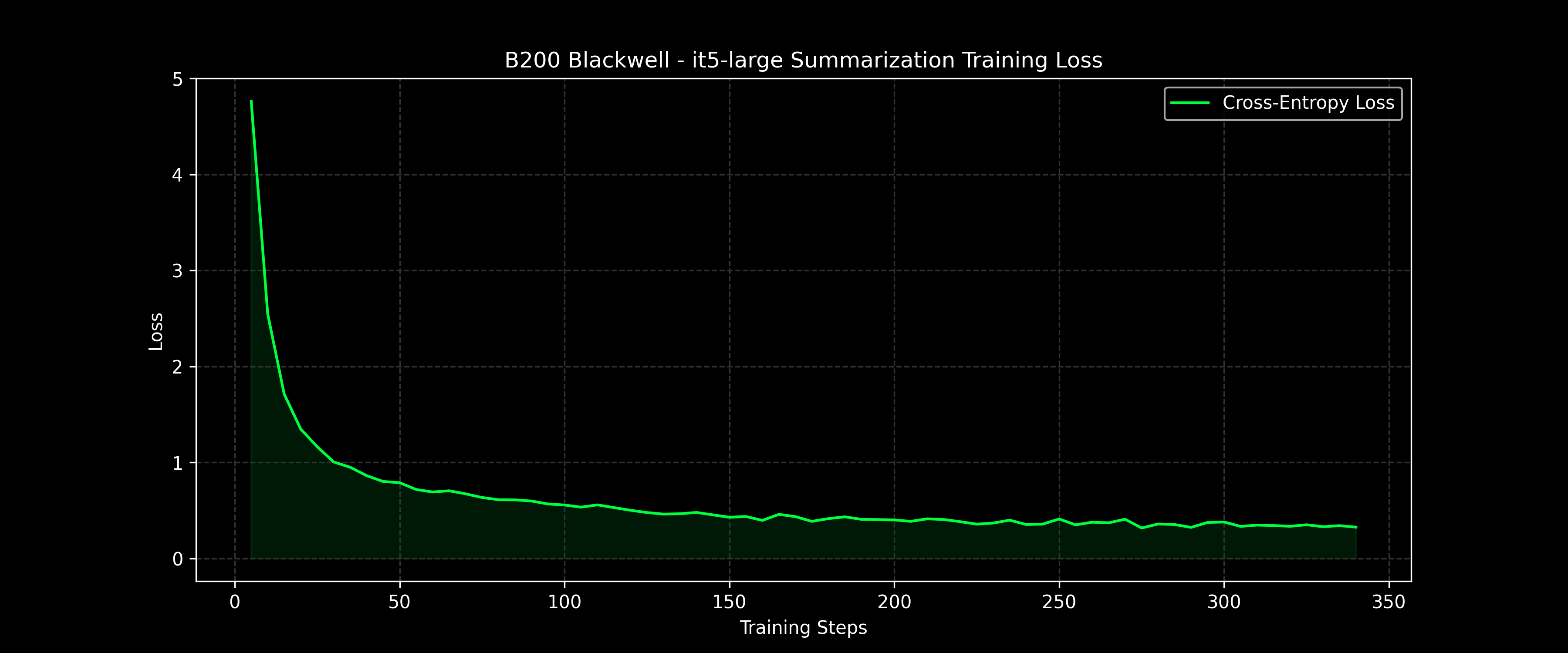

Training Metrics and Loss Curve

The following graph illustrates the convergence of the Cross-Entropy Loss during the training phase:
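Assuming the standard Hugging Face seq2seq setup (teacher forcing, with padding tokens in the labels masked out), the plotted loss would be the token-level cross-entropy of each reference summary given its article, averaged over all non-padding summary tokens in a batch:

$$
\mathcal{L}(\theta) = -\frac{1}{\sum_{i=1}^{N} T_i} \sum_{i=1}^{N} \sum_{t=1}^{T_i} \log p_\theta\left(y^{(i)}_t \mid y^{(i)}_{<t},\, x^{(i)}\right)
$$

Here $x^{(i)}$ is the $i$-th prefixed article in a batch of $N$, $y^{(i)}$ is its reference summary of $T_i$ tokens, and $p_\theta$ is the model's next-token distribution. A loss value $\mathcal{L}$ corresponds to a per-token perplexity of $e^{\mathcal{L}}$.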

Inference Example

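```python
import torch
from transformers import T5TokenizerFast, AutoModelForSeq2SeqLM

MODEL_ID = "{REPO_NAME}"  # Hub repository ID of this model

tokenizer = T5TokenizerFast.from_pretrained(MODEL_ID)
model = AutoModelForSeq2SeqLM.from_pretrained(MODEL_ID)

# Use a GPU when one is available
device = "cuda" if torch.cuda.is_available() else "cpu"
model.to(device)

def summarize_news(text):
    # Prepend the T5-style task prefix
    input_text = "summarize: " + text
    inputs = tokenizer(input_text, return_tensors="pt", max_length=1024, truncation=True).to(device)
    outputs = model.generate(
        **inputs,          # passes both input_ids and attention_mask
        max_length=150,    # upper bound on summary length, in tokens
        num_beams=4,
        early_stopping=True,
    )
    return tokenizer.decode(outputs[0], skip_special_tokens=True)

raw_text = "Insert news text here..."
print(summarize_news(raw_text))
```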

License and Terms of Use

This model is released under the CC BY-NC 4.0 license, which permits non-commercial use such as research and development. Any commercial application must also respect the rights of the owners of the original news content.
